Part 1: Chapter-by-Chapter Logical Mapping

Introduction: Stop Thinking Like an Artist

Core Claim: The path to music success isn’t better music—it’s a mindset shift from artist (product) to curator (distributor). Building a successful playlist ecosystem allows your music to be discovered organically rather than promoted desperately.

Supporting Evidence:

Author’s struggle: Released 150+ songs, built expensive studio, social media screamed into void

Turning point: Built “Mood Lifting Happy Songs” playlist from 957 → 2,500+ followers

Metrics: 374 monthly listeners with 3.83 average streams per listener (high engagement)

Validation: Major label (Zix Music) commissioned remix based on curation success, not artist fame

“The problem wasn’t my music. I knew it was good. The problem was my mindset.”

Logical Method: Contrast structure. Struggling artist (product-focused) vs. successful curator (value-focused). The shift from “please listen to my song” to “I’ve created an experience for you.”

Gaps/Assumptions:

150 songs released with no traction: Were they all good? Or is Batushi’s assessment (“I knew it was good”) ego-protective rather than objective?

Causation uncertainty: Did the playlist strategy cause growth, or did he finally make better music during this period?

Sample size: One person’s success story. No data from others using this method.

Scale ambiguity: 2,500 playlist followers is modest. Many playlists have 50K-500K+ followers. Is this really success or just improvement from baseline?

Label deal framing: “Zix Music commissioned me for a remix” - This is a work-for-hire gig, not a traditional record deal. Framing as “label deal” inflates the accomplishment.

Argumentative Structure: Problem (struggled despite good music) → Insight (wrong mindset) → Solution (curator approach) → Result (playlist growth + label recognition).

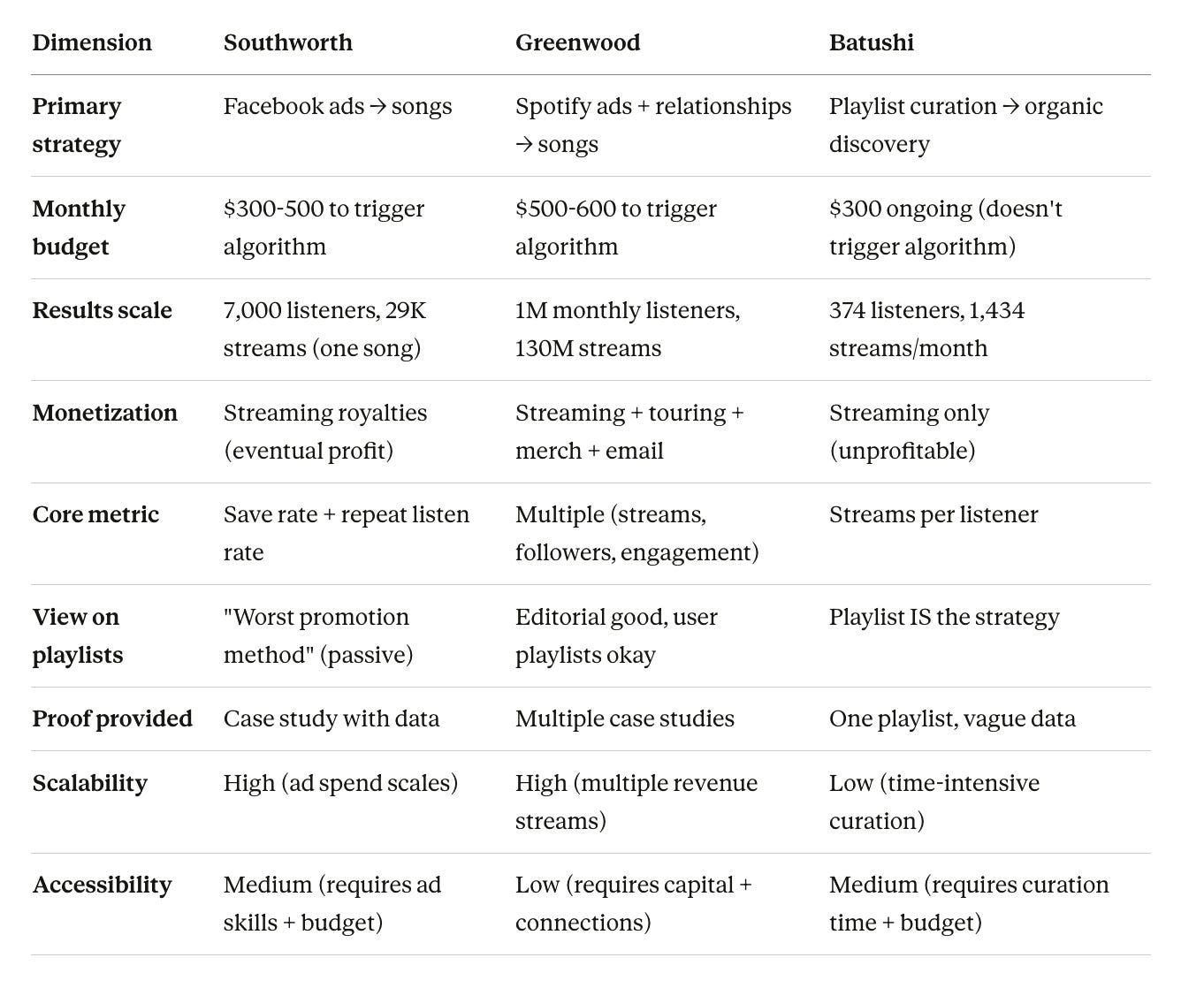

Comparison to Southworth/Greenwood:

Southworth: Facebook ads drive targeted traffic to music

Greenwood: Spotify ads + relationships drive traffic to music

Batushi: Playlist building drives organic discovery of music embedded within

Batushi’s approach is the most indirect of the three. He’s not promoting songs; he’s promoting playlists that contain songs.

Chapter 1: The Gapfiller Philosophy

Core Claim: Create and curate to fill emotional gaps in the musical landscape rather than following trends or competing for existing attention.

Foundation Story: Started at age 13 with 50s vinyl (Chuck Berry, Little Richard), absorbed energy and raw emotion. Music became “home.”

The Gapfiller Question:

As creator: “I don’t ask what’s popular. I ask what’s missing. What feeling? What specific summer vibe? What introspective thought doesn’t have its perfect soundtrack yet?”

As curator: “Does the world need another top 50 playlist? No, but does it need the perfect playlist for a quiet, happy Sunday morning? Absolutely.”

Artistic Philosophy: “You’re not competing. You’re completing the puzzle.”

Strategic Application: Define your playlist’s gap with “pinpoint accuracy” before adding a single song.

Logical Strength: The gapfiller framing reorients creative purpose from market competition to market completion. Instead of “beat existing songs,” aim for “create what doesn’t exist yet.”

Gaps/Assumptions:

How do you identify gaps?: Batushi describes the philosophy but not the methodology. How does an artist tell a gap that is a real opportunity from one that is unfilled because there’s no demand?

Taste vs. gap confusion: What feels like “missing music” might just be niche with no audience. Gapfiller philosophy could justify creating music nobody wants.

“Timeless music” claim without evidence: “This is how you create timeless music” - But none of Batushi’s songs are cited as timeless or widely recognized. The claim is aspirational, not proven.

Mixtape history: The evolution from mixtapes → rap production → trance → R&B suggests genre-hopping, which conflicts with Greenwood’s “genre consistency” advice for algorithmic success.

Comparison to Other Frameworks:

Southworth: No artistic philosophy, pure marketing optimization

Greenwood: “Fill gaps” not mentioned; focus on executing singles economy

Batushi: Gapfiller is artistic foundation for both creation and curation

This is Batushi’s attempt to preserve artistic integrity while executing commercial strategy. The gapfiller philosophy lets him say “I’m not selling out, I’m completing the musical landscape.”

Chapter 2: The Curator First Mindset

Core Claim: Positioning yourself as curator rather than artist changes power dynamics from supplicant (asking favor) to authority (offering value).

The Shift:

Struggling Artist: “Please listen to my song”

Successful Curator: “I’ve created an experience for you”

Three-Part Framework:

Your playlist is the product

Primary focus: growing playlist

Your music: “vital ingredient” but not “main dish”

Reframe: Playlist is what you serve to world, music is component

You become the authority

Curator = trusted guide

Organic discovery: “When they discover your own song sprinkled within, it’s an organic discovery, not a promotion. It feels like finding a hidden gem.”

Psychological difference: Discovery (listener agency) vs. Promotion (artist pushiness)

Credibility becomes your currency

Zix Music deal happened because “my playlist’s success was proof that I understand how to connect music with an audience”

Playlist success = social proof for music quality

Authority transfer: Good curator → probably makes good music

The Permission Structure:

Traditional artist approach:

Artist → Fan (direct pitch, high resistance)

Curator approach:

Curator → Fan (value provision, trust earned) → Music discovery (indirect, low resistance)

Logical Strength: This reframes artist-fan relationship from transactional (listen to me) to service-based (I serve your mood needs). Permission marketing theory: earn attention, don’t demand it.

Gaps/Assumptions:

8 of 100 songs = 8% self-promotion: Is this the optimal ratio? Batushi doesn’t test alternatives (5%, 10%, 15%). The 8% may be arbitrary.

“Organic discovery” may be strategic deception: Listeners think they’re discovering hidden gem, but artist deliberately placed it there. Is this authentic curation or manipulation disguised as curation?

Credibility transfer assumption: Does good curator = good artist? Not necessarily. Film critics aren’t necessarily filmmakers, and DJs aren’t necessarily producers. Batushi assumes curatorial skill proves artistic skill, but these are separate competencies.

Scale ceiling: 2,500 playlist followers gave him label remix gig. But this isn’t sustainable career—it’s one commission. Where’s the recurring revenue?

Comparison to editorial playlist curators: Spotify’s editorial curators don’t include their own music in playlists (conflict of interest). Batushi’s model is inherently self-promotional while claiming to be “value-first.”

Ethical Tension: The curator-first mindset is strategic framing that presents self-promotion as value provision. Listeners follow playlist for the experience, unaware they’re being funneled toward artist’s own music. This isn’t dishonest (music is clearly labeled with artist name), but it’s not as “organic” as Batushi claims.

Chapter 3: The Anatomy of a Mood

Core Claim: Successful playlists serve single, specific purpose. Precision in mood definition enables ruthless focus in song selection, building listener trust through consistent delivery.

Playlist Foundation (Three Questions):

The Mood (Be Specific):

❌ “Happy” (too broad)

✅ “Uplifting energetic windows down summer drive” (precise)

The Listener (Demographics + Psychographics):

Gen Z student studying?

Millennial getting ready to go out?

Gen X unwinding after work?

The Context (When/Where):

Dictates energy flow, tempo, lyrical content

Workout vs. Focus vs. Party = different sonic parameters

Selection Criteria: “Does this track serve the mood? Does it fit the context? If the answer is anything but a resounding yes, it doesn’t make the cut.”

Trust Mechanism: “Listeners follow Mood Lifting Happy Songs because they know it will deliver on its promise every single time. They don’t have to think. They just have to feel.”

Playlist as Utility: “Your playlist becomes a reliable utility in their emotional life.”

Logical Strength: This is brand positioning 101 applied to playlists. Narrow focus → clear promise → reliable delivery → trust → loyalty. The specificity prevents drift and creates consistency.

Gaps/Assumptions:

“Mood Lifting Happy Songs” is still quite broad: What counts as “happy”? Upbeat pop? Indie folk? Acoustic singer-songwriter? The name suggests specificity but allows wide latitude.

No methodology for mood definition: How does artist know what mood to target? Should they choose based on personal preference, market gap analysis, competition research? Not addressed.

Listener personas are guesses: Gen Z studying vs. Millennial partying - these require different playlists. Batushi’s single playlist can’t serve both. Who is his actual target listener?

Context dictates contradictions: “Windows down summer drive” requires upbeat, energetic music. But “happy songs” could include slower, contemplative tracks. How do you resolve mood conflicts?

Ruthless focus may limit discoverability: If playlist is too narrow, Spotify’s algorithm may struggle to categorize it or recommend it. Generic mood playlists (Chill, Happy, Workout) have algorithmic advantages.

Example Missing: Batushi doesn’t show song selection process in action. “Does this serve the mood?” is useful question, but without examples of yes/no decisions, readers can’t calibrate their own judgment.

Chapter 4: The Golden Positions

Core Claim: Playlist position dramatically affects song performance due to predictable listener behavior patterns. Strategic placement of your 8 songs within 100-song playlist maximizes streams, saves, and discovery.

The Skip Rate Reality: “The first position has the highest skip rate. People are settling in and are quick to judge.”

The Eight-Point Formula (100-song playlist):

Positions 2 & 4 - The Introduction:

Listener is now engaged (settled in)

Prime discovery slots

High attention, low skip rates

Use: Strong, catchy tracks that perfectly represent playlist’s core mood

Positions 6 & 9 - The Reinforcement:

Trust earned

Solidify the mood

Track feels like “core part of playlist’s identity”

Positions 20 & 28 - The Mid-Journey Reward:

Listener is deep into experience

Track feels like “familiar friend”

“Oh, I love this one” effect

High save rate zone

Position 48 - The Second Wind:

Deep enough to feel like fresh discovery

Re-engages listener starting to fade

Attention reset point

Position 85 - The Lasting Impression:

“Power move” - one of last things they hear

“Leaving your melody in their head long after the playlist finishes”

Recency bias exploitation

Listener Psychology Model:

Early tracks (1-10): High skip rate (listeners judging, settling in)

Middle tracks (20-50): High save rate (trust established, actively engaged)

Late tracks (80-100): Attention waning but recency bias for final impressions

Logical Strength: The psychological arc makes intuitive sense. People settle into playlists, pay most attention in middle section, and remember endings. Batushi is applying narrative structure (introduction, development, climax, resolution) to playlist flow.

Critical Gaps:

“After analyzing thousands of streams and listener behaviors”:

What data sources? Spotify for Artists only shows your own music’s performance.

Can’t see skip rates by position directly in Spotify analytics.

How did he isolate position effect from song quality effect?

Sample size: If he has 8 songs in playlist, he can test 8 positions. How did he “analyze thousands”? Multiple playlists? Over years? Methodology completely unclear.

No comparison to control positions:

What happens if you place your song at Position 1 vs. Position 2? Position 50 vs. Position 48?

Without A/B testing data, the “golden positions” might be arbitrary

Correlation vs. causation: Maybe his songs at Position 2 & 4 perform well because they’re his best songs, not because of position

Confounding variables:

Song placement ≠ only factor affecting performance

Quality, familiarity, mood fit, tempo flow from previous track all matter

Batushi doesn’t control for these
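One way to separate position from song quality is to randomize which song sits in which slot for each test period, so that over many periods every song spends time in every position. The sketch below is an assumption about how such a test could be set up, not something Batushi describes; `assign_positions` and the song labels are hypothetical names.

```python
import random

# The eight "golden positions" listed in Chapter 4.
GOLDEN_POSITIONS = [2, 4, 6, 9, 20, 28, 48, 85]


def assign_positions(songs, positions, seed):
    """Randomly pair each song with one position for a single test period.

    Hypothetical helper: a fixed seed makes the assignment reproducible,
    and a different seed per period gives a different shuffle.
    """
    if len(songs) != len(positions):
        raise ValueError("need exactly one position per song")
    rng = random.Random(seed)
    shuffled = list(positions)
    rng.shuffle(shuffled)
    return dict(zip(songs, shuffled))


# Placeholder labels, not real track titles.
songs = [f"song_{i}" for i in range(1, 9)]
period_1 = assign_positions(songs, GOLDEN_POSITIONS, seed=1)
period_2 = assign_positions(songs, GOLDEN_POSITIONS, seed=2)
print(period_1)
print(period_2)
```

Averaging each song’s results across periods, and each position’s results across songs, would give two separate estimates instead of one conflated number.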

“Proven formula” claim is overreach:

Proven = validated across multiple trials with controls

Batushi provides anecdotal pattern from his own playlist

This is hypothesis, not proof

Playlist length assumption:

100-song playlist is specific to his strategy

What if playlist is 50 songs? 200 songs? Does formula scale?

Position 85 in 100-song playlist = 85% through

Position 85 in 200-song playlist = 42.5% through (very different psychological context)
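The scaling question can be made concrete. The sketch below computes how far through a playlist a position sits, and shows one possible way to rescale the eight positions to another playlist length. Proportional scaling is purely an assumption here; Batushi gives no rule for playlists that are not 100 songs long, and `scale_positions` is a hypothetical helper.

```python
GOLDEN_POSITIONS = [2, 4, 6, 9, 20, 28, 48, 85]  # from Chapter 4, 100-song playlist


def relative_depth(position, playlist_length):
    """Fraction of the playlist already played when this position starts."""
    return position / playlist_length


def scale_positions(positions, from_length, to_length):
    """Map positions to the same relative depth in a playlist of another length.

    Uses integer round-half-up so results do not depend on float rounding.
    Positions are clamped to at least 1.
    """
    scaled = []
    for p in positions:
        q = (2 * p * to_length + from_length) // (2 * from_length)
        scaled.append(max(1, q))
    return scaled


print(relative_depth(85, 100))  # 0.85
print(relative_depth(85, 200))  # 0.425
print(scale_positions(GOLDEN_POSITIONS, 100, 50))
print(scale_positions(GOLDEN_POSITIONS, 100, 200))
```

Whether the listener psychology Batushi describes tracks relative depth, absolute track count, or elapsed listening time is exactly what the book leaves open.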

Alternative Explanation (Not Considered):

Maybe any consistent placement strategy works, and the specifics don’t matter. If you rotate songs regularly and track data, you’ll naturally optimize over time. The “golden positions” might be placebo—the real benefit is systematic testing and data review, not the positions themselves.

Comparison to Southworth/Greenwood:

Neither Southworth nor Greenwood discusses playlist position optimization. They focus on getting onto playlists (editorial or algorithmic), not optimizing performance within playlists. Batushi is addressing different problem: given that your song is on a playlist (yours), how do you maximize its performance?

Chapter 5: The Art of Rotation and Testing

Core Claim: Playlists must evolve through data-driven rotation. The “Top 8 + 2 Challengers” system ensures your strongest songs stay active while testing new material, removing ego from curatorial decisions.

The Rotation System:

Current State: 100 songs total (8 yours, 92 from other artists)

Why 8/92 ratio?: “This is crucial for credibility” - If playlist is 50% your music, it’s self-promotional. If it’s 8%, it’s curatorial with strategic self-inclusion.

The Process:

Step 1 - Identify Your Top 8:

Use Spotify for Artists data

Metrics: Streams, saves, audience retention

These become “anchor tracks”

Step 2 - Introduce 2 Challengers:

Add 2 new or underperforming songs

Place in test positions (example: 15 and 60)

Note: These aren’t “golden positions” (2, 4, 6, 9, 20, 28, 48, 85)

Contradiction: If golden positions are proven, why test in non-golden positions?

Step 3 - Let Data Decide:

Wait 2 weeks to 1 month

Analyze: Did challengers perform well? Did either outperform anchor tracks?

Step 4 - Rotate and Repeat:

If challenger proves itself → replaces lowest-performing anchor

Retired anchor exits playlist

New challenger enters

Continuous optimization loop
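The four steps can be sketched as a small rotation function. This is a minimal illustration under stated assumptions, not Batushi’s implementation: it assumes each track’s performance has already been reduced to a single `score` (the book does not say how), and the `Track` type, `rotate` function, titles, and scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    title: str
    score: float  # hypothetical single performance number per test period


def rotate(anchors, challengers):
    """Run one rotation cycle.

    A challenger replaces the lowest-scoring anchor only if it scores
    strictly higher. Returns (new_anchors, retired_tracks).
    """
    anchors = sorted(anchors, key=lambda t: t.score, reverse=True)
    retired = []
    for challenger in sorted(challengers, key=lambda t: t.score, reverse=True):
        weakest = anchors[-1]
        if challenger.score > weakest.score:
            retired.append(weakest)
            anchors[-1] = challenger
            anchors.sort(key=lambda t: t.score, reverse=True)
    return anchors, retired


# Made-up scores for illustration only.
anchors = [Track(f"anchor_{i}", s)
           for i, s in enumerate([80, 70, 60, 50, 40, 30, 20, 10], start=1)]
challengers = [Track("challenger_a", 35), Track("challenger_b", 5)]

new_anchors, retired = rotate(anchors, challengers)
print([t.title for t in new_anchors])
print([t.title for t in retired])
```

The anchor count stays at eight after each cycle; what the sketch cannot settle is how `score` should be computed or whether challengers and anchors were measured in comparable positions.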

The Philosophy: “This data-driven process ensures your playlist is always populated with your strongest material. It removes ego and emotion from the equation. You are not guessing what listeners want. You are letting their actions tell you directly.”

Logical Strength: The rotation system is meritocratic. Songs earn their positions through performance, not through artist attachment. This prevents stagnation and forces continuous improvement.

Critical Gaps:

Golden Positions vs. Test Positions Contradiction:

Chapter 4: Positions 2, 4, 6, 9, 20, 28, 48, 85 are “golden” (optimal performance)

Chapter 5: Test new songs at positions 15 and 60 (not golden)

Logic flaw: If you’re testing songs in non-optimal positions, you’re handicapping them. They’ll underperform not because of quality but because of position.

Better system: Test challengers in golden positions against current anchors in those positions

Anchor track retirement has no return path:

“The anchor track is retired for now”

But: Song quality doesn’t decay. If a song performed well for months and then gets beaten by a challenger, the cause may be listener fatigue (they’ve heard it too many times in this playlist).

Solution not spelled out: “For now” implies a return, but Batushi never says when or how retired anchors come back once they feel fresh again

No discussion of playlist position changes for anchor tracks:

If you have 8 anchor tracks in 8 golden positions, and 2 challengers in test positions, that’s 10 of your songs in playlist.

But Chapter 4 says 8 of 100 are yours.

Math doesn’t work. Either:

Anchor tracks don’t all occupy golden positions, OR

Challengers replace other artists’ songs (not your anchor tracks), OR

The system wasn’t fully thought through

Time horizon for “proven”:

2 weeks to 1 month for testing

But: Seasonal songs, cultural moments, algorithm changes could affect performance

Song that bombs in January might thrive in June

No discussion of temporal factors

What counts as “outperform”?:

Streams per listener? Save rate? Total streams? Skip rate?

Batushi says “streams, saves, and audience retention” but doesn’t weight them

If Song A has higher streams but lower saves than Song B, which wins?
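The weighting problem is easy to demonstrate. In the sketch below the same two songs swap places depending on which metric gets the most weight. All metric values and weights are invented for illustration (normalized to a 0-1 scale); the book supplies none, and `composite_score` is a hypothetical helper.

```python
def composite_score(metrics, weights):
    """Weighted sum of normalized metrics; weights are assumed to sum to 1."""
    return sum(weights[name] * metrics[name] for name in weights)


# Invented, normalized values: Song A streams more, Song B is saved more.
song_a = {"streams": 0.9, "saves": 0.4, "retention": 0.6}
song_b = {"streams": 0.6, "saves": 0.8, "retention": 0.6}

stream_heavy = {"streams": 0.6, "saves": 0.2, "retention": 0.2}
save_heavy = {"streams": 0.2, "saves": 0.6, "retention": 0.2}

for label, weights in [("stream-heavy", stream_heavy), ("save-heavy", save_heavy)]:
    a = composite_score(song_a, weights)
    b = composite_score(song_b, weights)
    winner = "Song A" if a > b else "Song B"
    print(f"{label}: A={a:.2f} B={b:.2f} -> {winner}")
```

Under the stream-heavy weights Song A wins; under the save-heavy weights Song B wins. Choosing the weights is a judgment call the data cannot make for you.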

The “Ego Removal” Claim:

“It removes ego and emotion from the equation.”

This sounds objective, but all data interpretation requires judgment:

Which metrics matter most?

How long do you test before deciding?

Do you account for confounding variables (position, recency, seasonality)?

Data doesn’t eliminate ego; it just shifts ego to data interpretation.

Comparison to Scientific Method:

Batushi’s rotation system resembles A/B testing, but lacks:

Control groups (what if you didn’t rotate at all?)

Sample size (testing 2 songs at a time = small sample)

Isolation of variables (position, quality, mood fit all conflated)

Statistical significance thresholds (how much better must challenger perform to replace anchor?)
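To make the significance point concrete, here is a standard two-proportion z-test applied to save rates. The test itself is textbook statistics, but applying it to playlist data is an assumption: Batushi describes no such check, the listener and save counts below are invented, and `two_proportion_z` is a hypothetical helper name.

```python
import math


def two_proportion_z(saves_a, listeners_a, saves_b, listeners_b):
    """Two-sided z-test for a difference between two save rates.

    Returns (z, p_value). Uses the pooled-proportion standard error.
    """
    p_a = saves_a / listeners_a
    p_b = saves_b / listeners_b
    pooled = (saves_a + saves_b) / (listeners_a + listeners_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / listeners_a + 1 / listeners_b))
    if se == 0:
        raise ValueError("save rates are all 0% or all 100%; test is undefined")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Invented counts: challenger saved by 30 of 100 listeners, anchor by 20 of 100.
z, p_value = two_proportion_z(30, 100, 20, 100)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up numbers, a 30% versus 20% save rate looks like a clear win but does not clear the conventional 0.05 threshold at 100 listeners each. At the audience sizes Batushi reports, many apparent winners would be indistinguishable from noise.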

It’s data-informed, not data-driven. There’s still substantial artistic judgment involved.

Chapter 6: The Data Doesn’t Lie

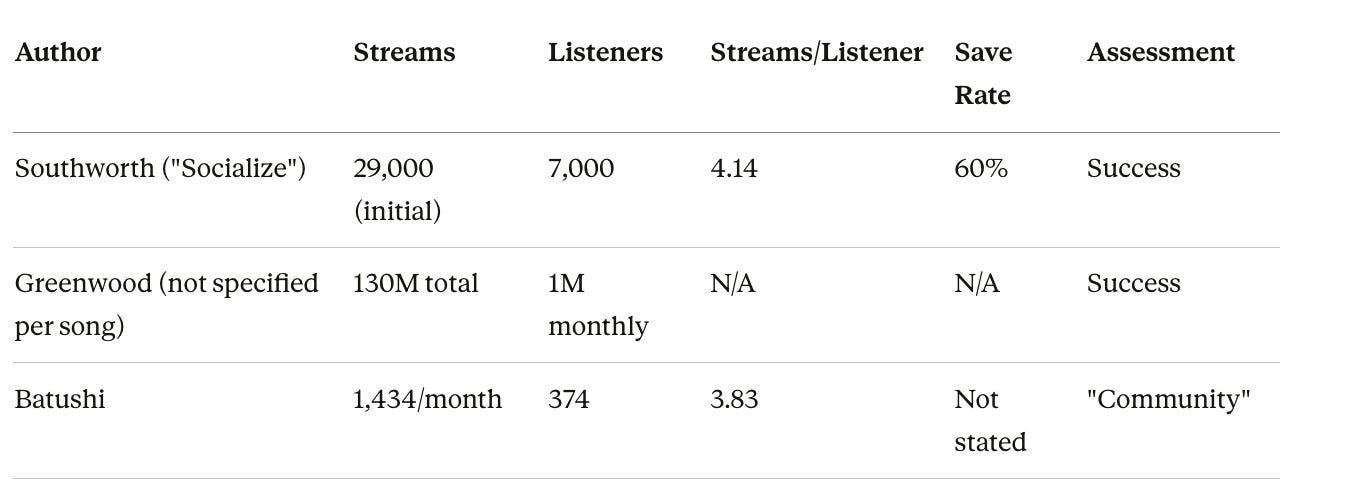

Core Claim: Streams per listener (depth of engagement) is more valuable than monthly listeners (breadth of reach). 374 highly engaged listeners streaming 3.83 times each > 10,000 listeners streaming once each.

The Metric That Matters:

Not this: “10,000 monthly listeners who each stream one song one time is not a fan base. It’s a puddle.”

This: “Having 374 listeners who stream your music a combined 1,434 times, an average of 3.83 streams each, is a community. It’s the beginning of a real, dedicated audience.”

Math Check:

374 listeners × 3.83 streams/listener = 1,432.42 streams

Batushi claims 1,434 streams

Math confirms (within rounding)
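The check above can be reproduced in a few lines, using only the three figures Batushi reports:

```python
listeners = 374
total_streams = 1434

# Average derived from the two raw counts.
streams_per_listener = total_streams / listeners
print(round(streams_per_listener, 2))  # 3.83

# Going the other way from the rounded average loses a little precision.
implied_total = listeners * 3.83
print(round(implied_total, 2))  # 1432.42
```

The gap between 1,432.42 and 1,434 is what you would expect from rounding the average to two decimals.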

Why Streams Per Listener Matters:

Indicates genuine interest, not accidental exposure

High repeat rate = saves, playlist adds, follows

Signals quality to Spotify’s algorithm

“Super listeners” become customers (merch, tickets, crowdfunding)

How Playbook Strategies Optimize This Metric:

Gapfiller philosophy: Creates music people feel is missing → encourages repeat listens

Curator-first mindset: Builds trust → listeners more receptive to artist’s music

Golden positions: Songs heard in right psychological moment → higher save rates

Rotation system: Best material always front and center

The Goal: “The goal isn’t just to be heard, it’s to be heard again.”

Logical Strength: This reorients success measurement from vanity metrics (total listeners) to engagement metrics (depth per listener). Aligns with Southworth’s emphasis on save rate and repeat listen rate.

Gaps/Assumptions:

374 listeners is tiny sample:

Batushi frames this as “community,” but it’s 0.0002% of Spotify’s 165M users (Greenwood’s stat)

This isn’t sustainable income. At 1,434 streams/month × $0.003/stream = $4.30/month = $51.60/year

Even at 20 songs generating same rate = $1,032/year

This doesn’t approach Greenwood’s $108K or Southworth’s profitability
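The income estimate works out as follows. The $0.003 per-stream rate is the assumption used in the text, not an official payout figure, and the 20-song scenario assumes every song earns at the same rate.

```python
PER_STREAM_RATE = 0.003      # payout per stream assumed in the text
MONTHLY_STREAMS = 1434       # Batushi's reported monthly streams
MONTHLY_LISTENERS = 374
SPOTIFY_USERS = 165_000_000  # Greenwood's figure as cited above

monthly_income = MONTHLY_STREAMS * PER_STREAM_RATE
yearly_income = monthly_income * 12
yearly_income_20_songs = yearly_income * 20  # assumes identical earnings per song
listener_share_pct = MONTHLY_LISTENERS / SPOTIFY_USERS * 100

print(f"monthly: ${monthly_income:.2f}")
print(f"yearly: ${yearly_income:.2f}")
print(f"yearly, 20 songs: ${yearly_income_20_songs:.2f}")
print(f"share of users: {listener_share_pct:.4f}%")
```

Unrounded, the yearly figure comes to about $51.62; the $51.60 in the text results from multiplying the rounded $4.30 by 12.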

Depth vs. breadth is false dichotomy:

Batushi implies you must choose between 10,000 shallow listeners and 374 deep listeners

Reality: You want both - large audience with high engagement

Southworth’s case study: 7,000 listeners with 60% save rate, 3x repeat rate

That’s 7,000 deep listeners, not 374

3.83 streams per listener is unremarkable:

Southworth targets 3-4x repeat listen rate (streams per listener)

3.83 sits inside Southworth’s target range, not above it

Batushi frames this as success, but by Southworth’s standards, it’s merely on target

“The data doesn’t lie” but interpretation can:

Batushi’s data: 374 listeners, 1,434 streams, 3.83 average

His interpretation: “Community,” “real dedicated audience,” “super listeners”

Alternative interpretation: Small, poorly-monetized audience that hasn’t grown meaningfully

The data is factual. The framing is optimistic.

No benchmarking against industry standards:

Is 3.83 streams/listener good? Compared to what?

Southworth’s “Socialize” had 60% save rate and 3x repeat rate

Greenwood’s songs maintain position on playlists due to low skip rates

Where does Batushi’s 3.83 rank?

Comparison to Southworth/Greenwood:

Batushi’s metrics are orders of magnitude smaller. He’s reframing modest results as strategic success.

Chapter 7: Fueling the Fire - Smart Promotion with SubmitHub

Core Claim: Modest promotional budget ($300/month) focused on playlist growth—not individual song promotion—drives sustainable music discovery through curator ecosystem.

Budget Allocation ($300/month total):

SubmitHub Ads (~$200/month?):

Target: Listeners of specific genres and moods aligning with “Mood Lifting Happy Songs”

CTA: “Follow playlist” (never “stream song”)

Strategy: Promote experience, not product

Instagram Ads ($50/month):

Retarget people who engaged with content

Creative: High-contrast text-based videos explaining curation secrets + link to playlist

Audience: Warm traffic (already aware)

The Rest (~$50/month):

TikTok content boosting

Essential tools (Canva)

Why SubmitHub is Key (Three Benefits):

Forces you to listen: Reviewing submissions sharpens curatorial skills, discovers new music for playlist freshness

Builds network: Connect with artists and labels

Provides credibility: Being approved curator = badge of authority reinforcing brand

The Strategy: “Use your budget to buy targeted attention for your playlist. The growth of your own music will be the natural, inevitable result.”

Logical Strength: The curator ecosystem strategy is elegant. Instead of competing with thousands of artists promoting songs, you’re building infrastructure (playlist + curator reputation) that pulls listeners to you. SubmitHub double-dips: you pay to promote your playlist while also getting paid (in credits or reputation) to curate submissions.

Critical Gaps:

$300/month budget is modest—maybe too modest:

Southworth: $300 total for successful campaign

Greenwood: $500-600/month minimum to trigger algorithm

Batushi: $300/month ongoing

If Greenwood’s threshold ($500-600) is accurate, Batushi’s budget is below algorithmic trigger point

His results (374 listeners) suggest he’s not triggering major algorithmic placement

“Natural, inevitable result” is unproven:

Batushi claims playlist growth inevitably causes music discovery

But: 2,500 playlist followers could listen to playlist without ever engaging with his 8 songs (out of 100)

Conversion rate not provided: What % of playlist followers actually stream/save his music?

If it’s 15% (2,500 × 0.15 = 375), that matches his 374 listener count, suggesting ~15% conversion

Is 15% “inevitable” or just correlation?
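The implied conversion rate is a one-line calculation. It rests on an assumption the book never establishes: that all 374 monthly listeners reached the music through the playlist. `implied_conversion` is a hypothetical helper.

```python
def implied_conversion(monthly_listeners, playlist_followers):
    """Share of followers who would have to be listeners, if all listeners are followers."""
    return monthly_listeners / playlist_followers


rate = implied_conversion(374, 2500)
print(f"{rate:.2%}")

# If (and only if) the rate held as the playlist grew, listeners at 5,000 followers:
projected_listeners = round(rate * 5000)
print(projected_listeners)
```

The projection is only as good as the constant-rate assumption, which is exactly the figure Batushi does not report.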

SubmitHub economics unclear:

How much does SubmitHub charge for playlist promotion ads?

How many playlist followers can you gain with $200/month?

Batushi doesn’t provide conversion data: $ spent → playlist followers → music streams

Instagram ad strategy is vague:

“High-contrast text-based videos explaining curation secret”

What secrets? How long are videos? What’s the hook?

“Retarget people who engaged with my content” - What content? How much engagement required?

No performance metrics: CTR, cost per follower, conversion rate

Becoming curator on SubmitHub:

Batushi says this builds network and credibility

But: Doesn’t explain how to become approved curator

Doesn’t discuss costs (SubmitHub curators must maintain quality standards, which requires time)

“Reviewing submissions sharpens curatorial skills” - How many submissions/month? Time investment?

No comparative analysis:

Would $300/month on Facebook ads (Southworth method) drive more streams than $300 on playlist promotion?

Batushi doesn’t test alternatives

He’s describing his approach, not proving it’s optimal

The Philosophical Divide:

Southworth/Greenwood: Direct music promotion (ads → song streams)

Batushi: Indirect music promotion (ads → playlist followers → organic discovery of songs within playlist)

Batushi’s approach has advantages:

Builds long-term asset (playlist)

Lower per-stream cost (organic discovery within playlist)

Avoids “desperate artist” stigma

Disadvantages:

Slower growth (playlist must grow first, then music follows)

Conversion uncertainty (playlist follower ≠ music listener)

Adds operational complexity (maintain playlist quality, rotate songs, balance self-promotion with credibility)

SubmitHub as Double-Sided Marketplace:

Batushi is both:

Curator (reviewing submissions, building playlist)

Promoter (running ads to grow playlist)

This creates flywheel:

Run ads → Playlist followers grow

Review submissions → Playlist quality improves

Better playlist → More followers

More followers → More submission interest

More submissions → Better song selection

Better songs → Stronger playlist → More followers

Your songs embedded = passive discovery

If this flywheel works, it’s powerful. But Batushi’s results (374 listeners) suggest the flywheel is spinning slowly.

Conclusion: You Are the Curator Now

Summary of Key Principles:

Stop begging for seat at someone else’s table. Build your own.

Fill gaps in musical landscape

Become the trusted guide

Obsess over data

Serve your audience with perfectly crafted mood

Results Claimed:

Built career

Secured label deal

Finally got music heard

The Mindset Shift: “Stop being a struggling artist. Start being the successful curator.”

Call to Action: “Now go build your playlist.”

Gaps in Conclusion:

“Secured a label deal”:

Earlier: “Zix Music commissioned me for a remix”

Now: “Secured label deal”

These are different things. Remix commission = freelance work. Label deal = recording contract.

Which is true? Or is Batushi conflating them?

“Built career” vs. actual metrics:

374 monthly listeners

1,434 streams/month = ~$4.30/month in royalties

This isn’t sustainable income

“Built career” is aspirational framing, not economic reality

“Strategies are not theoretical”:

Batushi claims these are “result of years of trial, error, and data analysis”

But: No aggregate data provided across multiple songs/playlists

It’s one playlist, one artist’s experience

That’s case study, not proof of transferable system

Missing chapters:

Book has 7 short chapters (~3,000 words total)

No discussion of: song selection for playlist (other artists’ music), legal issues (using others’ songs), growth tactics beyond ads (SEO, social media, collaborations), monetization (sponsorships, affiliate deals)

Compared to Southworth (20,000+ words) and Greenwood (30,000+ words), this is pamphlet, not book

Bridge Section: Synthesizing Batushi’s Logical Architecture

The Core Argument Chain

Problem: Musicians struggle despite good music because they think like artists (product-focused) rather than distributors (value-focused).

Solution: Become curator. Build playlist as primary product. Embed your music within playlist ecosystem.

Mechanism:

Define gap you’re filling (mood, context, listener)

Build playlist with ruthless focus on delivering that mood

Place your 8 songs in golden positions (2, 4, 6, 9, 20, 28, 48, 85) within 100-song playlist

Rotate based on data (Top 8 + 2 Challengers system)

Promote playlist (not songs) via SubmitHub ads + Instagram ads ($300/month)

Track streams per listener (optimize for depth, not breadth)

Result: Organic discovery of your music by engaged listeners who trust your curation.

Internal Consistency Analysis

Coherent Elements:

Mindset shift is genuine insight: Artist → Curator reframe changes power dynamic

Playlist as Trojan horse: Hide self-promotion inside value provision

Data emphasis: Track performance, rotate based on results

Depth over breadth: Better to have 374 engaged listeners than 10,000 passive

Internal Contradictions:

Golden Positions vs. Test Positions:

Chapter 4: These 8 positions are proven optimal

Chapter 5: Test new songs in positions 15 and 60

If 15 and 60 aren’t optimal, you’re sabotaging your tests

8 songs vs. 10 songs in playlist:

States: 8 of 100 are mine

Also states: 8 anchor + 2 challengers = 10 total

Math error, or anchor tracks don’t all occupy golden positions?

“Organic discovery” vs. strategic placement:

Batushi frames discovery as organic (listener finds hidden gem)

But: He strategically places his songs in psychologically optimal positions

This isn’t organic; it’s engineered to feel organic

Credibility requires restraint vs. profit requires promotion:

To maintain credibility: Keep self-promotion to 8% of playlist

To maximize profit: Include more of your songs

Tension: As artist grows catalog, do they create second playlist? Or do they violate 8% rule?

Budget inadequacy:

$300/month is below Greenwood’s $500-600 threshold for triggering Spotify algorithm

Batushi’s results (374 listeners) suggest he’s not triggering major algorithmic placement

So: Either algorithm triggers aren’t real (Greenwood/Southworth wrong), OR Batushi’s budget is too small (his strategy underresourced)

What Batushi Gets Right

The Curator Reframe: This is genuinely novel approach compared to Southworth and Greenwood. Instead of promoting music directly, build distribution channel (playlist) and embed music within. It’s permission marketing applied to streaming: earn attention through value, then convert attention to streams.

Psychological Positioning: Understanding that discovery feels different than promotion is sophisticated. “Hidden gem” effect is real—people value things they feel they found vs. things pushed at them. Batushi exploits this.

Streams Per Listener Focus: Batushi and Southworth agree: depth matters more than breadth. 3.83 streams/listener isn’t amazing, but it’s better than 1.0 streams/listener (pure passive exposure).

Modest Budget Realism: Unlike Greenwood’s $500-600/month or Southworth’s focus on triggering algorithms, Batushi describes approach for artists with $300/month. This is more accessible (though still not free).

What Requires Extreme Skepticism

“Proven” Formula Without Proof:

Golden positions based on “analyzing thousands of streams”

No methodology, no sample size, no controls, no peer review

Positions 2, 4, 6, 9, 20, 28, 48, 85 may be arbitrary

Pattern recognition in noise ≠ proven system

Results Don’t Match Claims:

“Built career” = 374 monthly listeners

“Secured label deal” = remix commission (not recurring deal)

“Finally got my music heard” = 1,434 streams/month

These are improvements over zero, not professional success

Sample Size of One:

All evidence from Batushi’s single playlist

No data from students, clients, or other curators using system

No validation that strategies transfer

Economic Unsustainability:

374 listeners × 3.83 streams × $0.003/stream = $4.30/month

Even with 20 songs at same rate = $86/month

Spending $300/month to earn $86/month = about -71% ROI

This isn’t profitable unless treating as long-term investment that eventually scales

But: 2,500 playlist followers converting at 15% = 375 listeners, so listener count appears capped by playlist size rather than still scaling.
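The return-on-spend arithmetic, plus the break-even point it implies, can be sketched as below. The $86/month revenue is the text’s 20-song scenario and the $0.003 rate is the text’s assumed payout; `roi` and `break_even_streams` are hypothetical helper names.

```python
def roi(revenue, cost):
    """Return on investment as a fraction of cost (negative means a loss)."""
    return (revenue - cost) / cost


def break_even_streams(cost, per_stream_rate):
    """Streams needed per month for royalties to cover the ad spend."""
    return cost / per_stream_rate


monthly_cost = 300     # Batushi's stated monthly budget
monthly_revenue = 86   # 20 songs at roughly $4.30 each, per the scenario above
rate = 0.003           # assumed payout per stream

print(f"ROI: {roi(monthly_revenue, monthly_cost):.1%}")
print(f"break-even streams/month: {break_even_streams(monthly_cost, rate):,.0f}")
```

Covering the budget from royalties alone would take about 100,000 streams a month, roughly 70 times the reported 1,434.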

Causation Claims Unverifiable:

“Strategically placed within that ecosystem found its audience organically”

Maybe playlist helped. Or maybe his music just improved after 150 failed songs.

No way to isolate playlist effect from music quality improvement

Comparison to Southworth and Greenwood

Key Insight:

Batushi is solving a different problem than Southworth/Greenwood:

They address: “How to promote finished music to audiences?”

He addresses: “How to build a music career with no existing audience and a modest budget?”

But his solution (playlist curation) doesn’t actually solve that problem at scale. 374 listeners isn’t a career. It’s a hobby.

The Unexamined Assumptions

1. Playlist Followers Convert to Music Listeners:

Batushi assumes: Playlist follower → Hears your songs in playlist → Streams/saves your music

But conversion rate is critical unknown:

2,500 followers × 15% = 375 listeners, which matches his 374, so the implied conversion rate is about 15%

That’s cumulative conversion of the existing follower base, not a recurring monthly gain

As playlist grows to 5,000 followers, does conversion rate hold? Or does it decrease (dilution)?

No data provided
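
Since no data is provided, the dilution question can only be explored hypothetically. The sketch below derives the implied conversion rate from the reported figures and then applies an invented dilution model, in which the rate shrinks by a fixed fraction each time followers double. That model and its `decay_per_doubling` parameter are assumptions made for illustration, not anything Batushi describes.

```python
import math

BASE_FOLLOWERS = 2500  # reported playlist followers
BASE_LISTENERS = 374   # reported monthly listeners


def implied_conversion(listeners: int, followers: int) -> float:
    """Share of playlist followers who show up as monthly listeners."""
    return listeners / followers


def projected_listeners(followers: int, decay_per_doubling: float = 0.0) -> float:
    """Project listeners at a new follower count.

    Hypothetical model: the conversion rate is multiplied by
    (1 - decay_per_doubling) for every doubling of the follower base.
    """
    base_rate = implied_conversion(BASE_LISTENERS, BASE_FOLLOWERS)
    doublings = math.log2(followers / BASE_FOLLOWERS)
    rate = base_rate * (1.0 - decay_per_doubling) ** doublings
    return followers * rate


print(f"implied rate: {implied_conversion(BASE_LISTENERS, BASE_FOLLOWERS):.1%}")
print(f"5,000 followers, no dilution:  {projected_listeners(5000):.0f} listeners")
print(f"5,000 followers, 20% dilution: {projected_listeners(5000, 0.2):.0f} listeners")
```

The gap between the two projections is the quantity the book would need to report for the conversion assumption to be checkable.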

2. Golden Positions Actually Matter:

Batushi’s entire system depends on positions 2, 4, 6, 9, 20, 28, 48, 85 being superior to other positions. But:

No controlled testing provided

Listener behavior varies by platform, context, playlist length

Many listeners use shuffle play, so they hear the tracks in a different order than the curated one

Position effects likely exist, but claiming specific positions are “golden” without A/B tests is speculation
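
For a sense of what a minimal position test could look like, here is a sketch of a two-proportion z-test comparing skip rates for the same song in two slots. It assumes the curator can somehow obtain per-position play and skip counts, which is itself uncertain. The counts in the example are invented purely to exercise the function.

```python
import math


def two_proportion_z(skips_a: int, plays_a: int,
                     skips_b: int, plays_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in skip rates between two positions."""
    p_a = skips_a / plays_a
    p_b = skips_b / plays_b
    pooled = (skips_a + skips_b) / (plays_a + plays_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / plays_a + 1 / plays_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented counts: the same song in a "golden" slot vs. an ordinary slot.
z, p = two_proportion_z(skips_a=120, plays_a=400, skips_b=150, plays_b=400)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Even a significant result for one song in one playlist would say little about whether the same positions work elsewhere, which is the generalization the book claims.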

3. 8% Self-Promotion is Optimal Ratio:

8 of 100 songs (8%) = credibility threshold?

But:

Why not 5%? Or 10%?

Does it vary by playlist size, follower count, genre?

Batushi never tested alternatives

The ratio may be arbitrary

4. Indirect Promotion Outperforms Direct Promotion:

Batushi’s thesis: Promoting playlist > promoting songs

But:

Southworth’s direct promotion (ads → songs): 7,000 listeners, 29K streams

Batushi’s indirect promotion (ads → playlist → songs): 374 listeners, 1,434 streams

By results, direct wins by ~20x

Maybe indirect promotion works at scale (100K+ playlist followers), but at 2,500 followers, it’s inefficient.
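
The ratios behind the comparison can be reproduced from the four reported numbers. This sketch uses only the listener and stream counts cited above. It does not adjust for spend or time period, so it is a rough comparison rather than a controlled one, and the class name is illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    name: str
    listeners: int
    streams: int

    @property
    def streams_per_listener(self) -> float:
        return self.streams / self.listeners


southworth = Campaign("Southworth (ads -> songs)", 7_000, 29_000)
batushi = Campaign("Batushi (ads -> playlist -> songs)", 374, 1_434)

listener_ratio = southworth.listeners / batushi.listeners
stream_ratio = southworth.streams / batushi.streams

for c in (southworth, batushi):
    print(f"{c.name}: {c.streams_per_listener:.2f} streams/listener")
print(f"listener ratio: {listener_ratio:.1f}x, stream ratio: {stream_ratio:.1f}x")
```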

5. “Organic Discovery” = Better Quality Listeners:

Batushi implies: Listeners who find your music in playlist (organic) > listeners from ads (paid)

Possible reasoning:

Organic discovery = self-selected, intentional

Ad-driven listening = purchased, potentially passive

But:

Southworth’s data shows ad-driven listeners save at 60% (highly engaged)

Greenwood’s ad listeners drive algorithmic placement

“Organic” doesn’t automatically mean higher quality

6. Curator Credibility Transfers to Artist Credibility:

Batushi claims: Good curator → People assume good artist

But:

Film critics ≠ filmmakers

Restaurant critics ≠ chefs

Playlist curators ≠ music producers

Curatorial skill and creative skill are orthogonal. Batushi’s playlist success proves he understands mood/flow/selection. It doesn’t prove his songs are good. Listeners might follow his playlist but skip his songs.

What the Book Reveals (and Conceals)

What It Reveals:

Curator approach is viable micro-strategy: For artists with no budget, building playlist and embedding music is zero-cost tactic

Playlist positioning may affect performance: Even if “golden positions” aren’t proven, playlist position probably matters somewhat

Streams per listener is important metric: Depth of engagement > breadth of reach (aligns with Southworth)

Authority positioning changes power dynamics: “I have experience to offer” > “Please listen to me”

What It Conceals:

Economic unsustainability: 374 listeners earning $4-5/month isn’t career

Scale ceiling: 2,500 playlist followers after “years of trial and error” is modest growth

Opportunity cost: Time spent curating playlist = time not spent making music, touring, networking

Confounding: Batushi may have succeeded despite the curator strategy, not because of it. Maybe his music finally improved after 150 songs.

Label deal inflation: Remix commission ≠ record deal, but conclusion conflates them

The Critical Question: Does This System Work?

Evidence For:

Batushi improved from ~0 listeners to 374 (growth exists)

3.83 streams per listener shows some engagement

Zix Music recognized value (commissioned remix)

Playlist grew from 957 to 2,500 followers (growth exists)

Evidence Against:

374 listeners after years of effort is not sustainable career

$300/month spend for $4-5/month return = massively unprofitable

No secondary validation (students, other curators, replication)

Results are roughly 20x smaller than Southworth’s by listeners and streams, and far below Greenwood’s

“Golden positions” lack methodological rigor

Conversion rates, ROI, and growth trajectory undefined

Verdict: The curator strategy may work as part of a broader approach, but as a standalone system, it’s underperforming. Batushi’s results suggest:

The strategy builds foundation (playlist followers, curator credibility)

But doesn’t scale to professional income

Needs to be supplemented with direct promotion (ads), collaborations, or other revenue streams

Alternative Hypothesis:

Maybe Batushi’s music just isn’t connecting at scale. The curator strategy gave him some audience (better than zero), but the plateau at 374 listeners suggests a fundamental ceiling imposed by music quality, genre fit, or promotional intensity.

If the curator strategy were truly powerful:

Playlist should have 10K-50K+ followers (many mood playlists reach this)

Conversion should yield 1,500-7,500 listeners (15% of 10K-50K)

That would be a meaningful audience, though at 3.83 streams per listener and $0.003/stream it still implies only about $17-86/month

At 2,500 followers, he’s stuck in hobby-tier.
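
The scale scenario can be projected with a few lines. The sketch holds the 15% conversion rate, 3.83 streams per listener, and $0.003 per-stream payout constant at every follower count. All three are this review’s working assumptions rather than measured values.

```python
CONVERSION_RATE = 0.15       # follower-to-listener rate implied by 374 / 2,500
STREAMS_PER_LISTENER = 3.83  # reported engagement figure
PAYOUT_PER_STREAM = 0.003    # flat per-stream rate assumed in this review


def project(followers: int) -> tuple[float, float]:
    """Return (monthly listeners, monthly royalty in dollars) for a follower count."""
    listeners = followers * CONVERSION_RATE
    royalty = listeners * STREAMS_PER_LISTENER * PAYOUT_PER_STREAM
    return listeners, royalty


for followers in (2_500, 10_000, 50_000):
    listeners, royalty = project(followers)
    print(f"{followers:>6,} followers -> {listeners:>6,.0f} listeners, ${royalty:,.2f}/month")
```

Under these assumptions the per-stream royalties stay in the tens of dollars per month even at 50,000 followers.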

Part 2: Full Literary Review Essay

Three hundred seventy-four listeners. That’s the number Beckham Batushi frames as “community,” as “real, dedicated audience,” as proof that his curator-first strategy works. These 374 people stream his music an average of 3.83 times each, generating roughly 1,434 streams per month—about $4.30 in monthly royalties at standard rates. Batushi calls this success. He’s built a career, secured a label deal, finally gotten his music heard. But the mathematics tell a different story, one about the gulf between strategic innovation and economic sustainability, between clever positioning and actual scale, between feeling like you’ve cracked the code and actually building a business that can pay rent.

The Curator’s Playbook proposes a fundamental reframe: stop thinking like an artist begging for attention, start thinking like a curator offering value. Build a playlist—Batushi’s “Mood Lifting Happy Songs”—and grow it from 957 to 2,500 followers by promoting the experience rather than individual songs. Embed your own music within the playlist (8 tracks out of 100, an 8% self-promotion ratio) in what Batushi calls “golden positions”—slots 2, 4, 6, 9, 20, 28, 48, and 85—where listener psychology supposedly maximizes engagement. Spend $300 monthly promoting the playlist via SubmitHub ads and Instagram retargeting. Rotate tracks based on performance data using a “Top 8 + 2 Challengers” system where underperforming songs get replaced by better-performing ones. The result, Batushi claims, is organic discovery of your music by listeners who already trust your curatorial judgment. Your music becomes the hidden gem they find rather than the advertisement they resist.

The philosophical foundation is his “Gapfiller” concept—create music and playlists that fill emotional holes in the landscape rather than competing for existing attention. “Even with all the music in the world, some songs are still missing,” he writes. “My motivation isn’t to follow trends. It’s to fill those gaps.” This positions the artist as completing a puzzle rather than fighting for space in a crowded market. As creative philosophy, it’s appealing. The shift from competition to completion is psychologically liberating and potentially artistically generative. But as economic strategy, it’s untethered from demand. What feels like a gap to you—a specific summer vibe, an introspective thought without its perfect soundtrack—might be a gap because there’s no market for it. The Gapfiller philosophy could justify creating music nobody wants, then explaining commercial failure as the market’s inability to recognize what was missing. Batushi doesn’t address this risk, treating gap identification as creative instinct rather than market research.

The curator-first mindset is where Batushi’s insight genuinely diverges from Andrew Southworth and Chris Greenwood. Both of them treat music as a product requiring direct promotion: ads that drive traffic to songs, relationships that place songs on playlists, algorithms triggered by song-level metrics. Batushi inverts this: the playlist is the product, and the music is an ingredient. You promote the playlist, and music discovery happens as a byproduct. The psychological mechanism is sound. When a listener follows “Mood Lifting Happy Songs” and encounters Batushi’s track at position 20, they’re in a trusted environment, having already accepted several songs. The track feels like part of a curated experience rather than an interruption. Compare this to a Spotify ad that plays between tracks (the interruption model) or a Facebook ad in the news feed (the attention theft model). Batushi’s approach is the invitation model: the listener chose the playlist, and the music comes as part of the package they wanted.

But the evidence that this strategy outperforms alternatives is absent. Southworth drove 7,000 listeners with $300 in Facebook ads. Batushi reached 374 listeners with years of effort and ongoing $300 monthly spend. If the goal is building an audience, Southworth’s direct approach outperforms by roughly 20x. If the goal is building “deeper” engagement, Southworth’s 3x repeat listen rate (streams per listener) approximately equals Batushi’s 3.83x. Batushi’s advantage, if there is one, must be sustainability or scalability. Maybe playlist followers compound over years while ad-driven listeners churn. But Batushi provides no longitudinal data. We don’t know if his 374 listeners are stable, growing, or declining. We don’t know how long it took to reach 2,500 playlist followers or whether growth has plateaued.

The “golden positions” chapter presents the playbook’s technical core, and it’s here that Batushi’s claims outrun his evidence most dramatically. After allegedly analyzing “thousands of streams and listener behaviors,” he identifies eight optimal positions within a 100-song playlist: slots 2, 4, 6, 9, 20, 28, 48, and 85. The reasoning is psychological: position 1 has a high skip rate (listeners are still settling in), middle positions benefit from established trust, and late positions exploit recency bias. This is plausible as a general principle. Narrative structure theory suggests that beginnings and endings matter while middles sag. Applying this to playlist flow makes intuitive sense.

But a “proven formula” requires proof, and Batushi provides none. How did he analyze “thousands of streams” when he can only test 8 songs at a time in his single playlist? Over how many years? Did he control for song quality by testing the same song in multiple positions to isolate the position effect? Did he account for temporal factors, given that songs perform differently in January vs. July, or during workout vs. study sessions? The Spotify for Artists dashboard doesn’t show skip rates by playlist position. How did he measure position-specific performance? The most likely scenario: Batushi placed his songs in these positions, they performed reasonably well, and he reverse-engineered a theory that these positions are “golden.” This is pattern recognition, not controlled experimentation. The positions may be optimal, or they may be arbitrary, or they may be optimal for his playlist but not generalizable to others.

The rotation system (Top 8 anchors plus 2 challengers under test, with data deciding the winners) is methodologically sounder than the golden positions claim. This is a form of A/B testing: keep your best material active, test new material against it, and replace underperformers with overperformers. The system “removes ego and emotion,” Batushi says, letting listener actions dictate playlist composition. But the implementation has a logical flaw: if you test challengers in positions 15 and 60 (his examples) while anchors occupy the golden positions (2, 4, 6, 9, 20, 28, 48, 85), you’re not running a fair test. You’re handicapping challengers by placing them in suboptimal positions. For the rotation system to work as described, challengers should be tested in golden positions against the current anchor tracks in those positions. Otherwise, you’re comparing songs in an optimal context (anchors) to songs in a suboptimal context (challengers), and, if the golden positions work as claimed, anchors will tend to win regardless of actual quality.
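
One way to picture a fairer test is a crossover schedule, sketched below. In each test period a challenger swaps places with one anchor, so both tracks are eventually measured in the same golden slot. This is an illustration of the fix proposed above, not Batushi’s method, and the track names and the `crossover_layouts` helper are hypothetical.

```python
GOLDEN_SLOTS = [2, 4, 6, 9, 20, 28, 48, 85]  # positions the book calls "golden"
PARKING_SLOTS = [15, 60]                      # the book's example challenger positions


def crossover_layouts(anchors: dict[int, str],
                      challengers: list[str]) -> list[dict[int, str]]:
    """Return a baseline layout plus one swapped layout per golden slot.

    In each swapped layout a challenger takes over one golden slot and the
    displaced anchor moves to that challenger's parking slot.
    """
    baseline = dict(anchors)
    for park, challenger in zip(PARKING_SLOTS, challengers):
        baseline[park] = challenger

    layouts = [baseline]
    for i, slot in enumerate(GOLDEN_SLOTS):
        park = PARKING_SLOTS[i % len(challengers)]  # alternate between challengers
        swapped = dict(baseline)
        swapped[slot], swapped[park] = baseline[park], baseline[slot]
        layouts.append(swapped)
    return layouts


anchors = {slot: f"anchor_{n}" for n, slot in enumerate(GOLDEN_SLOTS, start=1)}
layouts = crossover_layouts(anchors, ["challenger_a", "challenger_b"])

for period, layout in enumerate(layouts):
    print(period, {slot: layout[slot] for slot in sorted(layout)})
```

Comparing a challenger’s numbers in a slot during its swapped period with the anchor’s numbers in that same slot during the baseline period holds position constant. The two periods still differ in time, so seasonal effects would remain a confound.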

The budget chapter reveals the economic constraints that may explain Batushi’s modest results. Total promotional spend: $300 per month, split across SubmitHub ads (~$200?), Instagram ads ($50), and TikTok/tools ($50). Compare this to Southworth’s $300 total for a successful campaign or Greenwood’s $500-600 monthly minimum to trigger Spotify’s algorithm. If Greenwood’s threshold is accurate, Batushi is systematically underfunding his promotion. His strategy may work conceptually, but it’s underresourced. The curator approach could succeed at $1,000-2,000 monthly spend focused on playlist growth, but at $300 monthly, you’re below the algorithmic trigger point. This may explain why his playlist reached 2,500 followers without breaking through to exponential growth: the promotional intensity was too low.

The SubmitHub integration is the playbook’s cleverest tactical element. By becoming a curator on SubmitHub and reviewing other artists’ submissions, Batushi gains three benefits: sharpened curatorial skills (forced listening), network expansion (connections with artists and labels), and credibility (an approved curator badge). He’s promoting his playlist through SubmitHub ads while building authority as a SubmitHub curator. This creates a flywheel: more credibility → more submissions → better song selection → stronger playlist → more followers → more credibility. If this flywheel spins fast enough, it could generate exponential growth. But Batushi’s results suggest it’s spinning slowly. 2,500 playlist followers after “years of trial, error, and data analysis” isn’t failure, but it’s not the breakthrough the introduction promises.

The conclusion conflates a remix commission with a “label deal,” and this linguistic slippage reveals a broader pattern in the playbook. Batushi frames modest improvements as transformational success. Growing from 0 to 374 listeners is real progress, but it’s not “building a career” in any professional sense. Getting commissioned for one remix by Zix Music is validation, but it’s not “securing a label deal,” which implies an ongoing relationship and financial support. The entire playbook operates in this optimistic framing zone where incremental improvement gets narrated as systemic breakthrough. This isn’t dishonest, since Batushi experienced these as breakthroughs relative to his previous struggles, but it’s misleading for readers who might interpret “built career” as “achieved sustainable income from music.”

Comparing Batushi to Southworth and Greenwood exposes the playbook’s fundamental limitation: it’s a micro-strategy for artists willing to accept micro-results. Southworth and Greenwood describe paths to thousands of listeners, hundreds of thousands of streams, and eventually profitable operations. Their strategies require more capital ($500-5,000 per song) and more sophistication (ad platform mastery, relationship building, multi-channel integration), but they scale. Batushi describes a path to hundreds of listeners and thousands of streams. His strategy requires less capital ($300/month) and different skills (curation, patience, data analysis), but it doesn’t scale, or at least he hasn’t shown that it does. Maybe the curator approach hits an inflection point at 10,000 playlist followers, where conversion rates and algorithmic pickup create exponential growth. But Batushi stopped at 2,500, and without data from others who’ve pushed past that threshold, we’re left guessing whether the strategy has a ceiling or just needs more time.

The ethical dimension Batushi doesn’t examine is whether the curator-first approach is authentic value provision or sophisticated self-promotion disguised as service. He positions himself as a trusted guide building an experience for listeners, but the experience is engineered to funnel attention toward his own music. The 8% self-promotion ratio maintains a veneer of credibility, but the strategic placement in golden positions and the data-driven rotation optimize for his commercial benefit, not the listener experience. This isn’t unethical, because listeners get a functional playlist they enjoy, but it’s not as altruistic as the “offering value” framing suggests. It’s value provision and strategic self-promotion simultaneously. The curator-first mindset is a permission structure that makes self-promotion socially acceptable by embedding it within a larger service.

Methodologically, the playbook’s weakness is its reliance on a single case study without controls, comparative data, or replication. Every claim draws from Batushi’s experience with one playlist over an unspecified timeframe. We don’t know if 2,500 followers represents 6 months or 6 years of effort. We don’t know if 374 listeners is growing, stable, or declining. We don’t know if the golden positions formula works for other curators or just happened to work for Batushi’s specific playlist with his specific music in his specific genre. The “thousands of streams and listener behaviors” he allegedly analyzed remain undetailed. How did he measure skip rates by position when Spotify doesn’t provide that data? How did he control for song quality when testing positions? These methodological questions don’t get asked, much less answered.

The budget allocation reveals another gap. Batushi spends $300 monthly promoting the playlist but doesn’t track ROI. If playlist promotion costs $300/month and generates 1,434 streams/month (~$4.30), he’s losing about $296/month. The justification must be long-term: building an asset (playlist + followers) that eventually generates a return. But without growth trajectory data, we don’t know if he’s building toward profitability or just sustaining an expensive hobby. Greenwood and Southworth both address profitability explicitly: Southworth shows a $300 spend generating a $1,100 return over time, and Greenwood shows songs generating $5,400/year in passive income. Batushi provides no such analysis. The economic model is implied (eventually this scales) but never demonstrated.
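
To make the missing ROI analysis concrete, the sketch below estimates the break-even point: how many monthly streams and listeners would be needed for royalties alone to cover the $300 spend. It assumes the same flat $0.003 per-stream payout used throughout this review and holds streams per listener at 3.83. The variable names are illustrative.

```python
import math

MONTHLY_SPEND = 300.0        # reported promotional budget
PAYOUT_PER_STREAM = 0.003    # flat per-stream rate assumed in this review
STREAMS_PER_LISTENER = 3.83  # reported engagement figure
CURRENT_STREAMS = 1_434      # reported monthly streams

break_even_streams = MONTHLY_SPEND / PAYOUT_PER_STREAM
break_even_listeners = math.ceil(break_even_streams / STREAMS_PER_LISTENER)
growth_needed = break_even_streams / CURRENT_STREAMS

print(f"break-even streams/month:   {break_even_streams:,.0f}")
print(f"break-even listeners/month: {break_even_listeners:,}")
print(f"growth needed from today:   {growth_needed:.1f}x")
```

A growth-trajectory chart in the book would show whether that multiple is being approached. Without one, the long-term justification cannot be evaluated.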

The playbook works best as a conceptual intervention (the mindset shift from artist to curator is a genuinely useful psychological reframe) and worst as a tactical manual. The specific positions (2, 4, 6, 9, 20, 28, 48, 85) lack supporting evidence. The rotation system has a logical flaw (testing in non-golden positions). The budget is below algorithmic trigger thresholds. The conversion rates and growth curves are undefined. For an artist with literally zero budget, building a playlist and embedding music in it is a valid tactic. But for an artist with $300/month to spend, Southworth’s Facebook ads or Greenwood’s Spotify ads would likely deliver better results faster. The curator approach demands patience, which Batushi demonstrates, and scale, which he hasn’t achieved.

What makes the playbook valuable despite these limitations is the permission it grants. Many artists feel sleazy promoting their own music because it violates norms around artistic purity and commercialism. The curator frame removes this psychological barrier. You’re not selling out; you’re filling gaps. You’re not promoting yourself; you’re serving your audience. Your music gets heard not because you pushed it but because listeners discovered it naturally within a trusted context. This permission structure may unlock productivity for artists paralyzed by promotion anxiety. Even if the specific tactics underperform alternatives, the mindset shift could be worth the price of admission.

But the book requires critical reading. Don’t mistake Batushi’s 374 listeners for a “built career.” Don’t assume golden positions are proven without demanding proof. Don’t accept that the curator approach necessarily outperforms direct promotion; the comparative data suggests otherwise. Do extract the useful core: positioning matters, depth beats breadth, indirect discovery feels better than direct promotion, and playlist building is a viable form of long-term asset creation. Then test whether Batushi’s specific implementation (8 positions, 8% ratio, $300 budget, SubmitHub focus) works for your music, or whether you need to adapt the philosophy while discarding the unproven tactics. The curator reframe is a real contribution to music marketing discourse. The golden positions formula is speculation dressed as science. Know the difference.

Tags: playlist curation strategy, Spotify playlist positioning tactics, indirect music promotion methodology, streams per listener engagement optimization, curator-first artist mindset