The Month You Owe the Machine

How Spotify's 21-day release window became a filter for who the algorithm rewards — and who it quietly exits

There is a song sitting on a hard drive right now. Someone made it. It is finished. It is good — or good enough, which is the only category that matters once the making is done. And the person who made it cannot release it for a month.

Not because of mastering. Not because the distributor needs processing time. Not only because copyright clearance requires it, though copyright clearance is part of it. The month is required because the algorithm needs to learn the song before the song can reach anybody. The machine needs time to read it, map it, contextualize it, and decide where it belongs in the spaces where people might accidentally discover it.

The platform calls this your “release window” and presents it as a service. It is a service. It is also a relationship. And like all relationships, understanding what it actually is changes how you navigate it.

The algorithm is not a meritocracy. It is a scoring system designed to reward the signals it can measure. The release window is where that scoring begins.

What the Month Is Actually For

Spotify processes more than 100,000 new tracks every day. That number is not an achievement the platform brags about. It is a problem the platform has been solving, at scale, since the cost of music production collapsed from $75,000–$150,000 per professional track to something closer to $5 in API credits. The same tools that made it possible to reconstruct a dead man’s voice from family archive tapes and teach it to sing his own theology back to his children — the same tools that recovered lullabies from ethnomusicological fieldnotes and returned them to families who had lost them — also made the content farm possible. The ghost artist catalog. The mood wallpaper. The 75 million tracks Spotify removed in a single twelve-month period under spam and fraud protocols.

Same tools. The content farm and the recovered lullaby are distinguished only by intent. Spotify cannot distinguish them at scale without time. So it built a window.

During those twenty-one to twenty-eight days before a track goes live, the platform’s ingestion pipeline runs three overlapping processes. The copyright verification layer screens for unauthorized samples, AI voice impersonations, and metadata hijacking — where a content farm falsely credits a famous artist as a featured performer to redirect that artist’s followers toward manufactured streams. The holding period is how the platform catches infringing material before it circulates, not after.

Underneath the compliance layer, the audio analysis runs in parallel. Spotify’s processing framework extracts what the documentation describes as a 42-dimensional vector from every track: danceability, energy, valence, acousticness, instrumentalness, temporal structure. It is not listening for quality. It is listening for category. Where does this song live in the mathematical space of all music? Which candidate pools does it belong to? Which listeners, who have never heard this artist, have behavioral histories suggesting they might lean toward this sound? Get mapped incorrectly into that space, and you are on the platform but invisible to everyone except the people who already know your name.
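To make "where does this song live" concrete, here is a minimal sketch of the idea, not Spotify's pipeline. It assumes a track is reduced to five of the features named above, that each candidate pool has an average feature vector, and that closeness is measured with cosine similarity. The pool names, the numbers, and the function names are all hypothetical, chosen only for illustration.

```python
import math

# Five of the audio features named in the text; the real vector is described
# as 42-dimensional. Every value below is invented for illustration.
FEATURES = ["danceability", "energy", "valence", "acousticness", "instrumentalness"]

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def nearest_pool(track, pools):
    """Return (pool_name, similarity) for the pool whose centroid is closest."""
    scored = ((name, cosine_similarity(track, centroid)) for name, centroid in pools.items())
    return max(scored, key=lambda pair: pair[1])

# Hypothetical candidate pools, each summarized by an average feature vector.
POOLS = {
    "late-night acoustic": [0.30, 0.20, 0.25, 0.90, 0.40],
    "workout pop":         [0.85, 0.90, 0.80, 0.05, 0.00],
    "ambient focus":       [0.15, 0.10, 0.30, 0.70, 0.95],
}

# A hypothetical new release: quiet, acoustic, mostly sung.
new_track = [0.35, 0.25, 0.20, 0.85, 0.30]

pool, score = nearest_pool(new_track, POOLS)
print(f"closest pool: {pool} (similarity {score:.3f})")
```

Nothing in this calculation asks whether the song is good. It only asks which neighborhood the numbers fall into, which is the point of the paragraph above.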

By 2025, the mapping extended beyond audio. Large language models now parse an artist’s lyrics, bio, social media presence, and press coverage to build what the platform calls a “semantic embedding” — a cultural context map. A track described everywhere as a breakup anthem gets placed in the emotional territory of breakup anthems before it has accumulated its first thousand streams. The LLM is solving the cold start problem: how do you recommend something for which there is no behavioral history yet?

The month is the answer.

What the Month Reveals

The scoring system rewards the signals it can measure. Here is the most consequential one.

Spotify maintains a separate Artist Popularity Score — a number between 0 and 100 — recalculated every twenty-eight days. This score determines the platform’s “starting traction” for every subsequent release. An artist who releases consistently, every four to six weeks, compounds this score upward. An artist who breaks cadence — who takes a year to make something better, who falls ill, who runs out of money, who had a child — watches the score decay and must rebuild from a lower floor.
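The cadence effect is easier to see as arithmetic. The sketch below is a toy model and not the platform's formula, which is not public. It assumes the score loses a fixed fraction each twenty-eight-day cycle and gains a fixed bump whenever a release lands in that cycle. The starting value, the decay rate, and the bump size are hypothetical parameters picked only to show the shape of the curve.

```python
def simulate_score(release_cycles, total_cycles, start=20.0, decay=0.90, boost=6.0):
    """Toy popularity score, recalculated once per 28-day cycle.

    Assumptions (not Spotify's formula): the score is multiplied by `decay`
    every cycle, and `boost` points are added in any cycle with a release.
    """
    score = start
    history = []
    for cycle in range(total_cycles):
        score *= decay                      # assumed decay every cycle
        if cycle in release_cycles:
            score += boost                  # assumed bump from a new release
        score = max(0.0, min(100.0, score)) # the score lives between 0 and 100
        history.append(round(score, 1))
    return history

CYCLES_PER_YEAR = 13  # 13 cycles of 28 days is 364 days

# Artist A releases in every cycle; Artist B releases once and goes quiet.
steady = simulate_score(set(range(CYCLES_PER_YEAR)), CYCLES_PER_YEAR)
one_shot = simulate_score({0}, CYCLES_PER_YEAR)

print("steady cadence:", steady)
print("single release:", one_shot)
```

Under these assumptions the two artists start from the same floor and end the year far apart, with no term anywhere in the model for how good the music is.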

This is not a flaw in the system. It is the system working as designed. The platform functions as a continuous publishing operation, and it rewards continuous publishing. A musician who makes twelve tracks a year on a monthly schedule extracts more algorithmic value than a musician who makes three extraordinary ones. This is not the platform failing to recognize quality. It is the platform recognizing something else entirely: engagement velocity, which is a proxy for the kind of catalog-building behavior that keeps listeners on the platform generating data.

The twenty-one-day window is not just a technical requirement. It is a cadence requirement. Are you willing to operate on this infrastructure’s timeline? Are you willing to pitch thirty days out, run pre-save campaigns, build anticipation for something that does not yet exist? Are you willing to be in perpetual pre-production, always working three releases ahead?

Most independent musicians cannot sustain this. The ones who can are often the ones who started with advantages: distribution budgets, team infrastructure, the bandwidth to treat release cadence as a full-time job alongside the making. The release window does not discriminate against any artist by intent. But its structure produces inequity all the same, because it requires a level of organizational capacity that correlates with resources that are not evenly distributed.

The month you owe the machine is the month that reveals, clearly, what the machine was built to optimize for. It was not built for the person with a finished song on a hard drive. It was built for the catalog.

Reading the Infrastructure

The Trust Score is x-ray vision for navigating what the window produces. Songs that drop off a playlist in exactly seven days point to a pay-for-placement model. Songs retained for twenty-eight days or more indicate genuine curation. Genre entropy analysis distinguishes human tastemakers, whose playlists hold 3–6 coherent genres, from bot farms whose lists mix death metal and K-pop in the same queue. Churn, entropy, and engagement patterns are the behavioral traces the infrastructure leaves behind. They can be read.
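Two of those traces can be sketched in a few lines. This is an illustration of the general technique, not the Trust Score itself, whose actual formula is not given here. It assumes you have a genre label for each track on a playlist and the dates each placement was added and removed. Genre entropy is computed as Shannon entropy over the genre counts, and churn as the share of placements removed exactly seven days after being added. The function names and sample data are hypothetical.

```python
import math
from collections import Counter
from datetime import date

def genre_entropy(genres):
    """Shannon entropy (in bits) of a playlist's genre labels.

    Low entropy: a few coherent genres. High entropy: everything mixed together.
    """
    counts = Counter(genres)
    total = sum(counts.values())
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

def seven_day_share(placements):
    """Fraction of (added, removed) placements that lasted exactly seven days."""
    if not placements:
        return 0.0
    hits = sum(1 for added, removed in placements if (removed - added).days == 7)
    return hits / len(placements)

# Hypothetical playlists, ten tracks each.
curated = ["indie folk"] * 5 + ["chamber pop"] * 3 + ["americana"] * 2
scattered = ["death metal", "k-pop", "lo-fi", "polka", "trap",
             "opera", "reggaeton", "bluegrass", "techno", "gospel"]

# Hypothetical placement history: (date added, date removed).
placements = [
    (date(2025, 1, 1), date(2025, 1, 8)),   # gone after exactly 7 days
    (date(2025, 1, 3), date(2025, 1, 10)),  # gone after exactly 7 days
    (date(2025, 1, 5), date(2025, 2, 20)),  # kept well past 28 days
]

curated_entropy = genre_entropy(curated)
scattered_entropy = genre_entropy(scattered)
churn = seven_day_share(placements)

print(f"curated: {len(set(curated))} genres, entropy {curated_entropy:.2f} bits")
print(f"scattered: {len(set(scattered))} genres, entropy {scattered_entropy:.2f} bits")
print(f"share of placements dropped at exactly 7 days: {churn:.0%}")
```

Where to draw the line between a tastemaker and a bot farm is a judgment call that these numbers inform and do not settle. Any threshold would need to be checked against playlists whose behavior is already known.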

The Musinique research trilogy — Musical Endogeneity, Musical Imitation Game, Algorithmic Momentum — exists because the platform’s claim to meritocracy deserves scrutiny grounded in data, not grievance. Musical Endogeneity asks whether Spotify’s popularity scores measure organic listener preference or measure themselves: when editorial placement raises a score, which then justifies further placement, the referee is playing the game. Algorithmic Momentum tests whether the score can be manufactured — the Intellijend Strategy claims $300–$500 per release can reach 100,000 streams and a popularity index of 45–55 within twelve months through geographic arbitrage and front-loaded velocity spending — and what happens to the score when the spending stops. If it decays back to baseline, the asset was rented algorithmic position, not a listener relationship.

These are not academic questions. They are the questions an independent artist needs answered before deciding how much of their organization, budget, and attention to hand over to the window.

The Part the Documentation Does Not Say

There is a human curation layer inside the window that works differently from everything described above.

The editorial team — the people who decide what lands on RapCaviar, New Music Friday, Fresh Finds — uses algorithmic triage first. The machine surfaces which of the 100,000 daily uploads meet genre and quality benchmarks, so the humans are not drowning. Then the editors look for the story. Not the audio features. Not the popularity score. The story. The cultural context. Who is this artist? What is this song for? Where does it fit in the larger conversation happening right now?

This is what the algorithm cannot evaluate — and what the editorial layer inside the release window is actually looking for.

Newton Williams Brown exists because a son needed to hear his dead father’s voice sing the theology that sent him unarmed onto a battlefield. The voice was reconstructed from family archive tapes, extended through synthesis into song — and when people who loved William Newton Brown hear it, they go quiet. The amygdala is not confused. It knows the acoustic signature of someone it loved. Tuzi Brown exists because political grief has a specific frequency — minor mode, behind the beat, the Holiday inheritance — and that frequency serves a nervous system in mourning better than any mood playlist assembled from skip rates. These are not algorithm-compatible statements. They are true statements about why music gets made and what it does when it reaches the right person.

The machine cannot evaluate them. The human can.

Respect the technical requirements. Build the metadata carefully. Pitch thirty days out. Run the pre-save campaign. Understand the engagement velocity window and what the algorithm needs from those first seventy-two hours.

And then trust that the song knows something the 42-dimensional vector does not.

If there is a story behind it — a human need it was built to serve, a specificity that no content farm would bother to manufacture — the editorial layer is looking for it. The month you owe the machine is also the month you have to make sure the humans inside the machine know it exists.

The window is the ante. The song is still what matters.

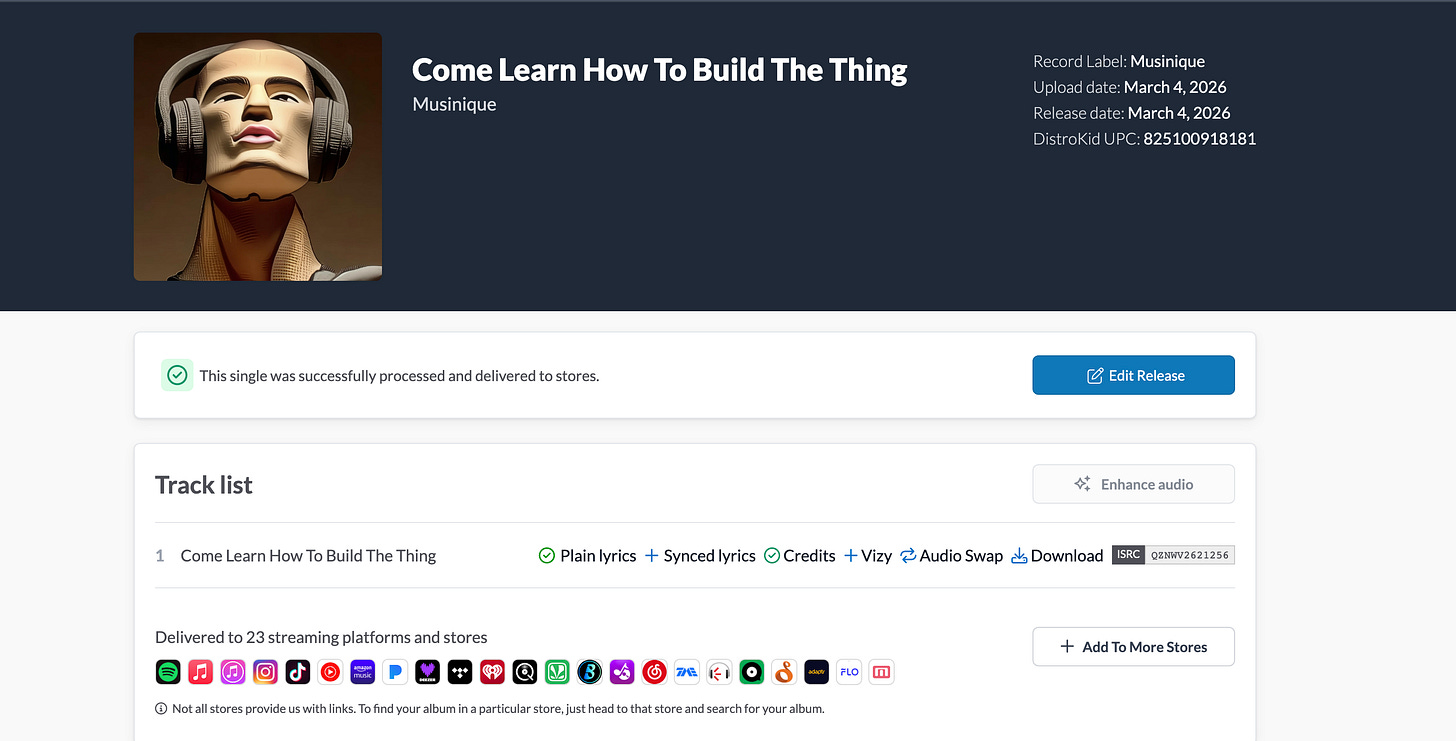

I am the founder of Musinique, which builds tools for independent artists navigating the ecosystem described here. The Indie Curator Intelligence research and the Musical Endogeneity framework live at musinique.substack.com.
