There is a moment in the workflow (specific, unremarkable, easy to miss) where the song stops being text and becomes sound. You have the lyrics. You have the performance tags from meta nana. You have the style string from session nana. You open Suno, switch to Custom Mode, and paste three things into three fields. Then you click Create.

Two versions generate. You listen.

That moment, the listening, the first time the River Mumma’s songs come back as audio, is not a technical event. It is the arrival of something that did not exist before. A warm contralto over a one-drop rhythm stretched to near-heartbeat pace, river water underneath, dub reverb on the snare decaying longer than physics should allow. The session notes described it. The meta tags encoded it. Suno rendered it. But none of those three steps alone produced what you are now hearing. The pipeline produced it. The pipeline is the point.

What Custom Mode Actually Is

Most beginner Suno tutorials skip past it in a sentence: switch to Custom Mode. The tutorial does the same: it is one line, one screen instruction, one click. But the instruction carries more weight than its brevity suggests.

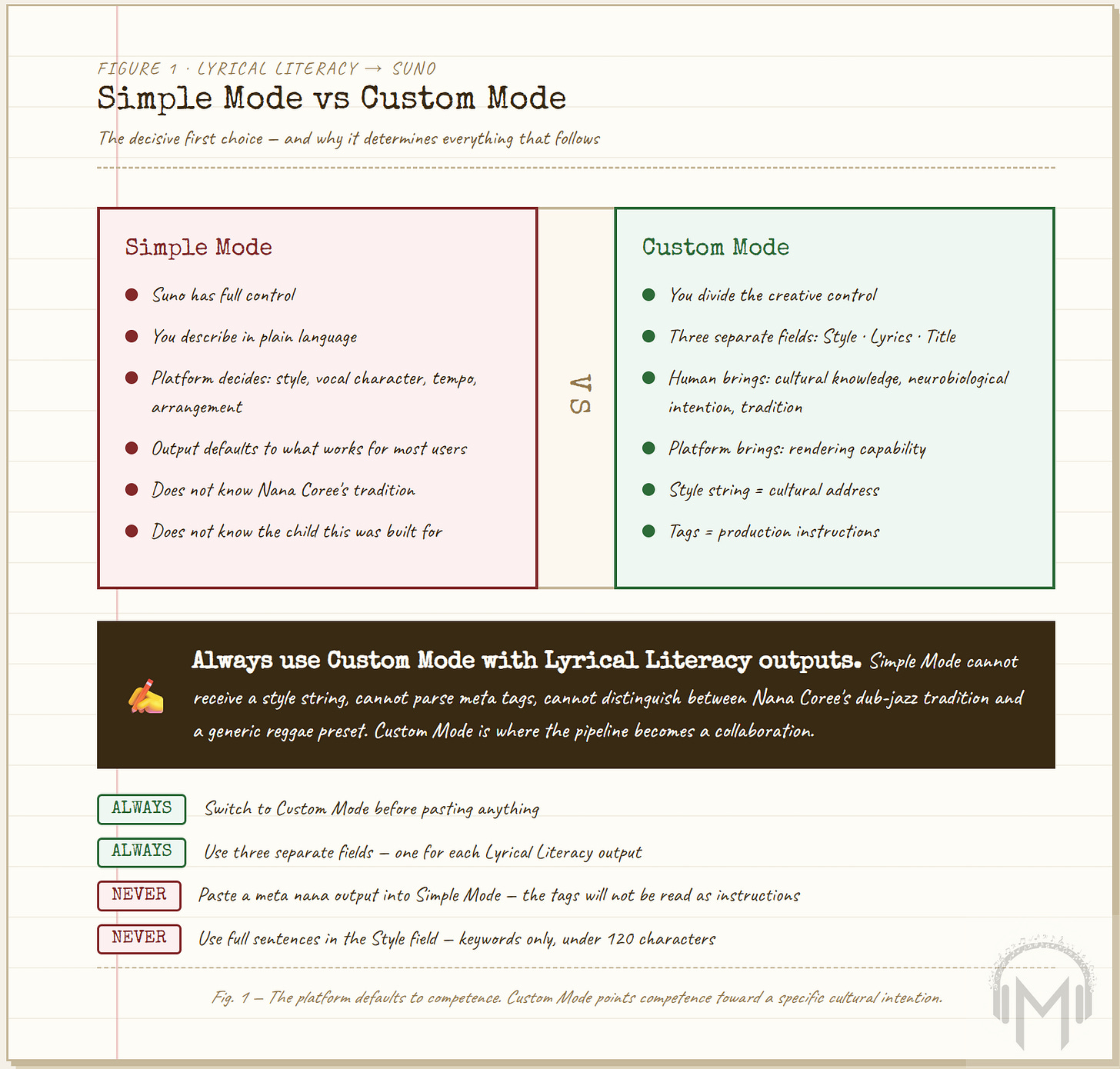

Simple Mode gives Suno full control. You describe what you want in plain language and the platform decides everything: structure, style, vocal character, instrumentation, tempo, arrangement. The output is competent. It is also generic in the specific way that full algorithmic control produces generic output: the platform defaults to what it knows works for most users, which is not the same as what works for Nana Coree’s dub-jazz tradition or for the child in Kingston who needs to fall asleep to the River Mumma’s songs rather than a Western lullaby.

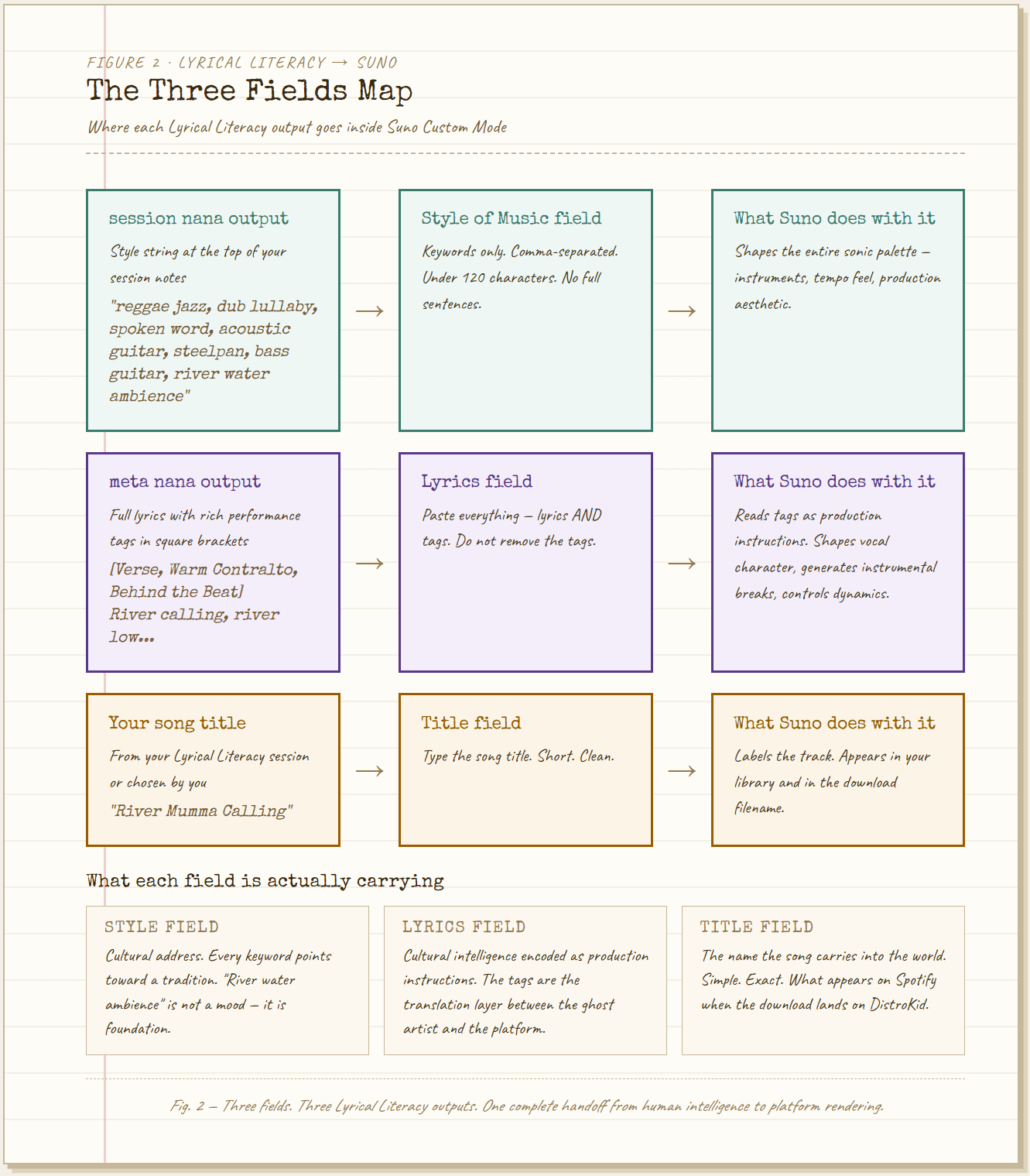

Custom Mode divides the creative control. Style, lyrics, structure: three separate fields, each receiving a different kind of intelligence from the Lyrical Literacy output. This division is not just a workflow convenience. It is the formal structure of the collaboration: the human brings the cultural knowledge, the neurobiological intention, and the specific tradition. The platform brings the rendering capability. Neither works without the other. This is what Musinique means when it says great music is humans plus AI, not AI alone. The pipeline makes the proposition visible.

The style string (reggae jazz, dub lullaby, spoken word, acoustic guitar, steelpan, bass guitar, river water ambience) is not a genre tag. It is a cultural address. Every comma-separated term in that string points toward a specific tradition: the Kingston yard, the dub studio, the Caribbean folk heritage, the River Mumma. Suno cannot know any of that history. But it can render the sound of it when the style string is precise enough and the meta tags are doing their job. The Lyrical Literacy tool spent the session notes building that precision. The style string is the distillation.

What the Tags Are Doing

The tutorial makes a quiet claim that deserves more attention than it receives in six minutes of beginner instruction: the tags are doing real work; don’t remove them.

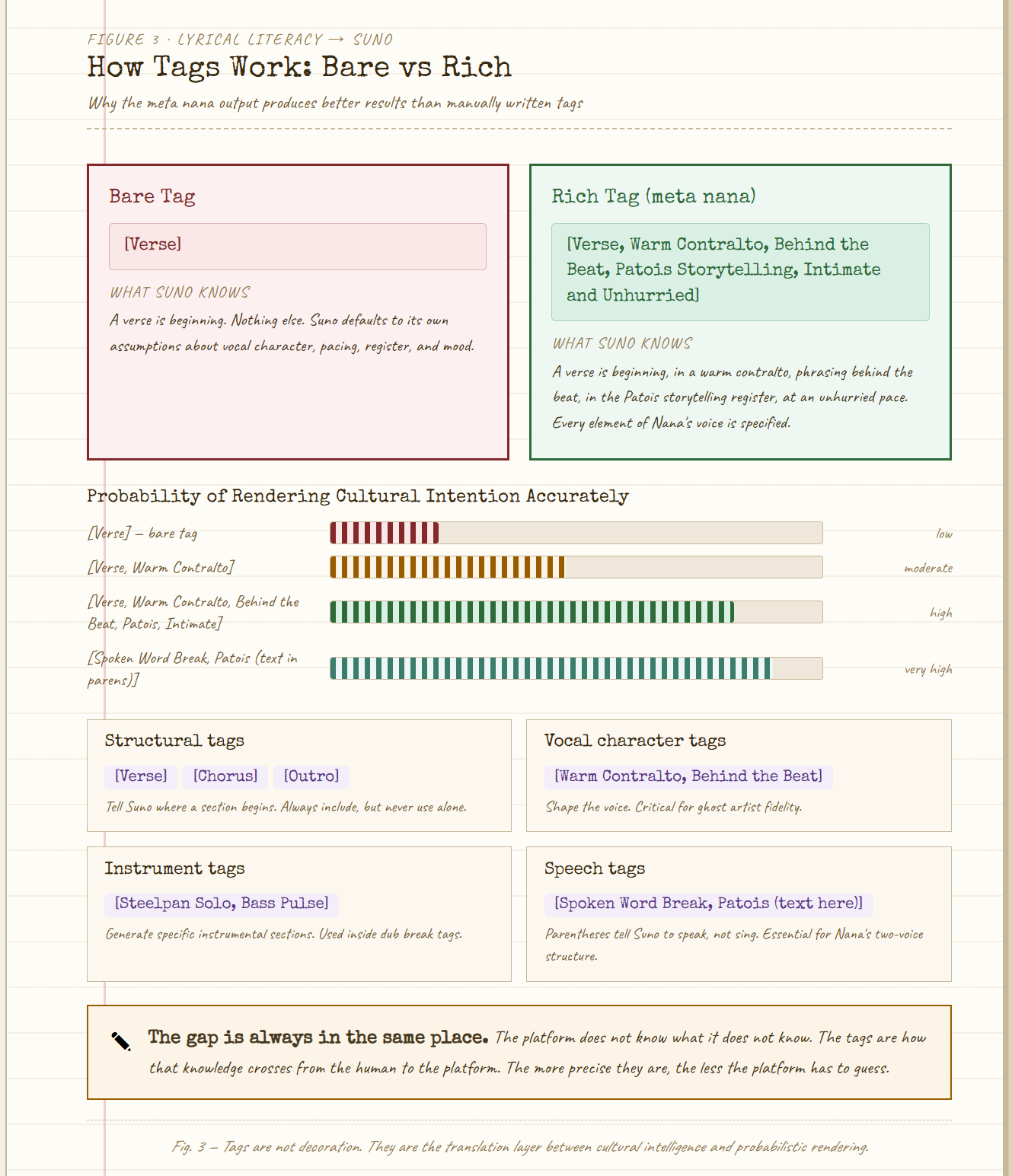

Suno’s response to bracketed tags is imperfect and probabilistic. [Warm Contralto, Behind the Beat, Intimate and Unhurried] does not guarantee a warm contralto singing behind the beat in an intimate register. It shapes the probability distribution of what Suno generates. The more specific and coherent the tags, the more the generation tends toward the intended output. The more generic the tags ([Verse] instead of [Verse, Patois Spoken Word, Bass Rising]), the more Suno defaults to its own assumptions, which are not Nana Coree’s assumptions.

This is the meta command’s core function. It is not adding decoration to lyrics. It is translating the cultural intelligence of the ghost artist modifier into a language the production platform can act on. When meta nana wraps a spoken word section in [Spoken Word Break, Patois, Dub Reverb Settling In], it is encoding Nana Coree’s two-voice logic (the transition between storytelling and song, the moment the narrative becomes melody without announcement) as a production instruction.

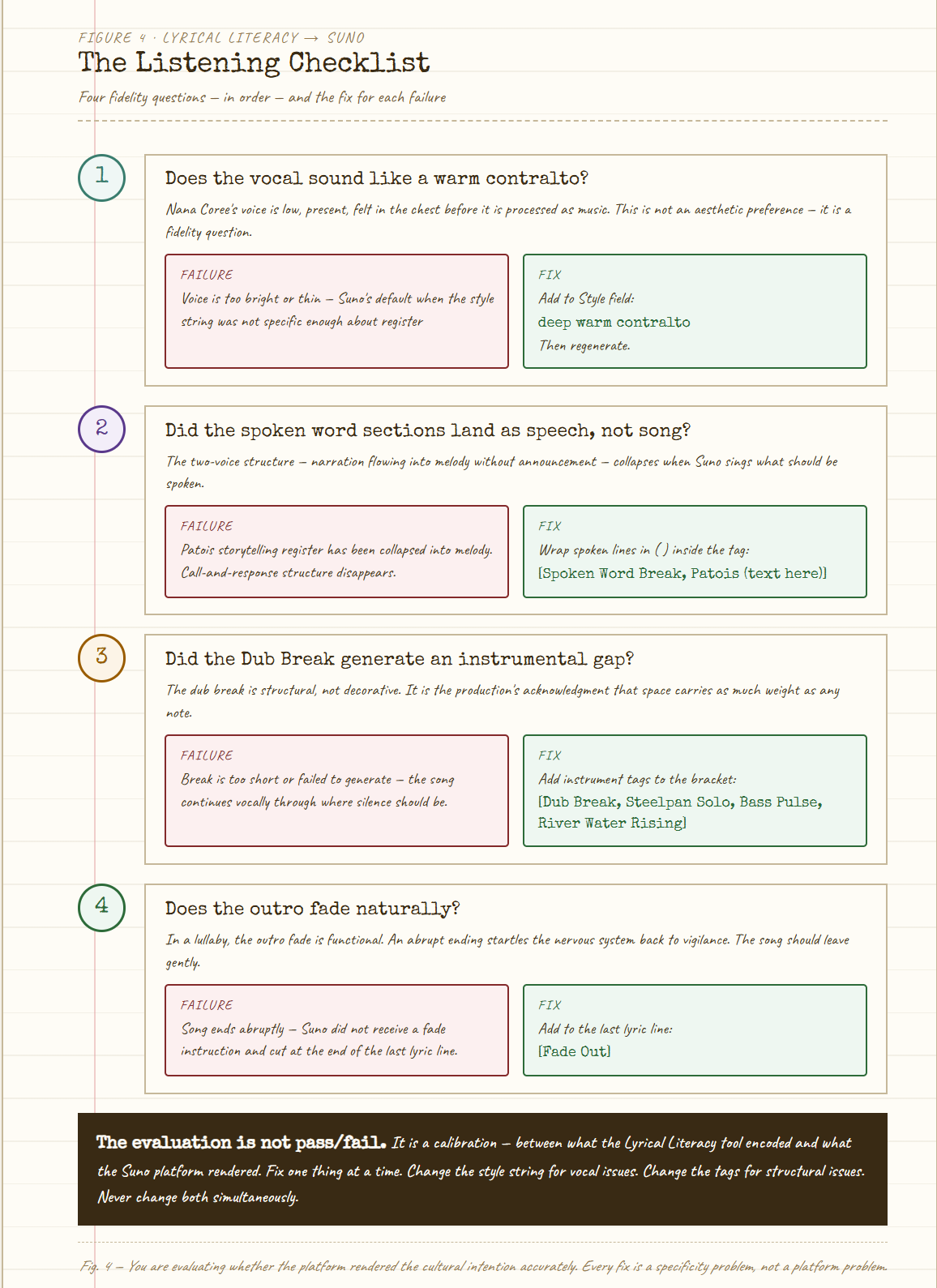

The tutorial’s troubleshooting section is, in this light, also a pedagogy. When the spoken word sections get sung instead of spoken, the fix is to wrap the lines in parentheses inside the tag. When the dub break fails to generate an instrumental gap, the fix is to add more instrument tags to that bracket. When the outro ends abruptly, the fix is to add [Fade Out] to the last line. Each of these fixes is teaching the user something about the relationship between cultural intention and platform rendering: where the gap is, and how to close it.
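Two of the fixes above are mechanical enough to sketch in code. What follows is a minimal illustration, not part of the Lyrical Literacy tool or of any Suno API. The helper names `wrap_spoken_lines` and `add_fade_out` are hypothetical, the lyric lines are placeholders, and the sketch assumes each section opens with a bracketed tag on its own line. The bracketed tags come from the text above.

```python
def wrap_spoken_lines(lyrics: str, tag_keyword: str = "Spoken Word") -> str:
    """Wrap each lyric line under a tag containing tag_keyword in parentheses.

    Assumption: a section starts at a line that is entirely a [bracketed tag]
    and runs until the next such line.
    """
    out = []
    in_spoken = False
    for line in lyrics.splitlines():
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # A new section begins; check whether it is a spoken one.
            in_spoken = tag_keyword.lower() in stripped.lower()
            out.append(line)
        elif in_spoken and stripped and not (
            stripped.startswith("(") and stripped.endswith(")")
        ):
            out.append(f"({stripped})")
        else:
            out.append(line)
    return "\n".join(out)


def add_fade_out(lyrics: str) -> str:
    """Append [Fade Out] to the last line unless it is already there."""
    lines = lyrics.rstrip().splitlines()
    if lines and "[Fade Out]" not in lines[-1]:
        lines[-1] = f"{lines[-1]} [Fade Out]"
    return "\n".join(lines)


# Placeholder lyrics: the lyric lines are invented for illustration only.
lyrics = "\n".join([
    "[Spoken Word Break, Patois, Dub Reverb Settling In]",
    "placeholder spoken line",
    "[Outro]",
    "placeholder sung line",
])

fixed = add_fade_out(wrap_spoken_lines(lyrics))
print(fixed)
```

Because Suno's response to tags is probabilistic, edits like these only make the intended rendering more likely. The listening step still decides whether the fix worked.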

The gap is always in the same place. The platform does not know what it does not know. It does not know that Nana Coree’s lullabies put children to sleep reliably, or that adults could not remember them by morning, or that the river water is not ambient decoration but foundation: always present, never foregrounded, the continuous reminder of where this music came from. The meta tags are how that knowledge crosses from the human to the platform. The more precise they are, the less the platform has to guess.

The Pipeline as Democratic Infrastructure

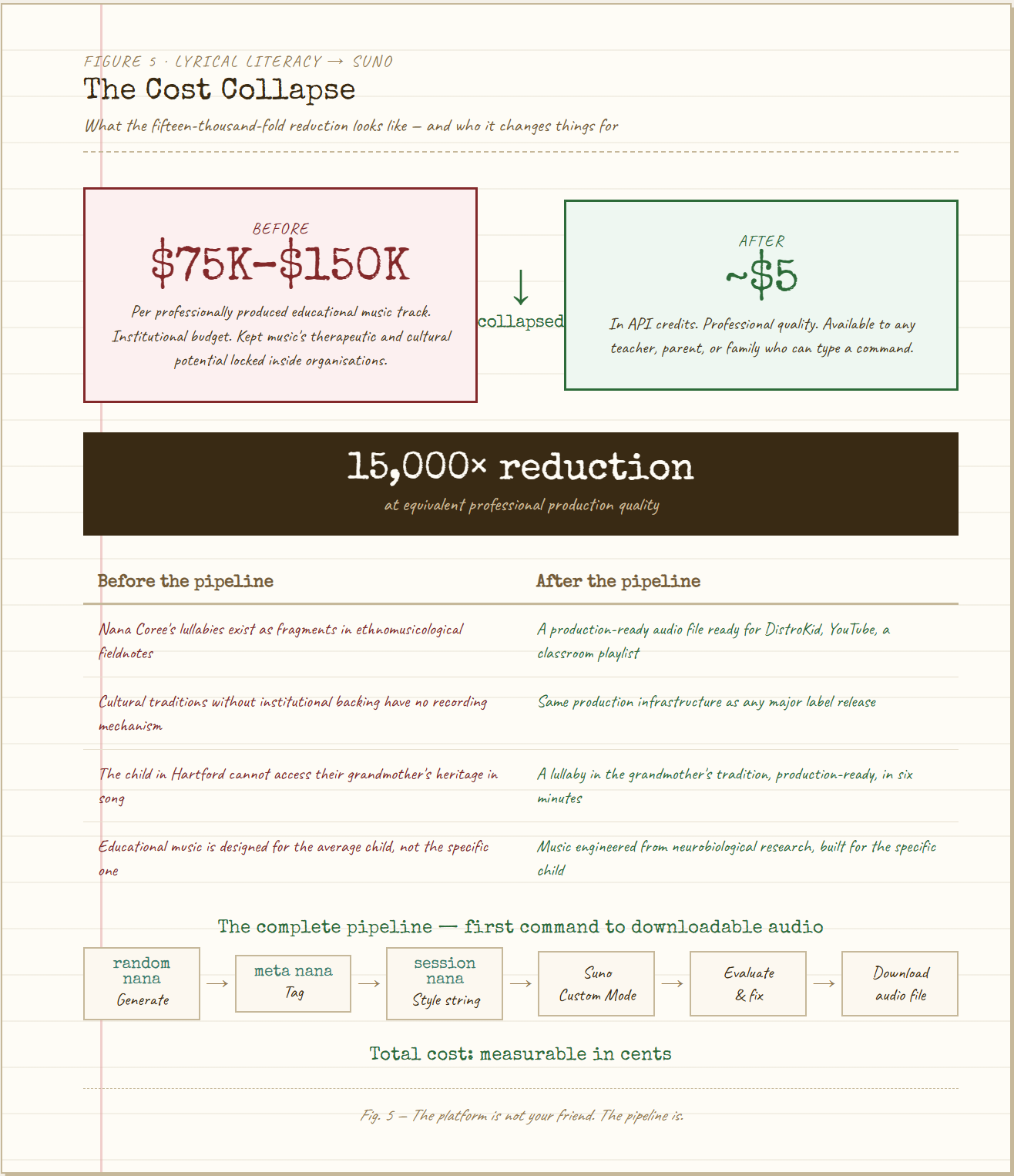

At $0.003 to $0.005 per stream, one million Spotify plays generate between three and five thousand dollars in royalties. A single mid-tier live show generates comparable revenue in one night. The recording is the ad. The show is the product. This is the economic argument Musinique makes in the From Catalog to Circuit research, but the Lyrical Literacy pipeline makes a different and prior argument.
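The royalty arithmetic can be checked in a few lines. This is a minimal sketch using only the per-stream rates quoted above. The function name `royalty_range` is illustrative, and real payouts vary by platform, territory, and distributor.

```python
def royalty_range(streams: int, low_rate: float = 0.003, high_rate: float = 0.005):
    """Return (low, high) royalty estimates in dollars for a stream count.

    The default rates are the per-stream figures quoted in the text.
    """
    return streams * low_rate, streams * high_rate


low, high = royalty_range(1_000_000)
print(f"${low:,.0f} to ${high:,.0f}")  # prints "$3,000 to $5,000"
```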

Before the question of monetization is the question of production. Professional-quality educational music cost between seventy-five thousand and one hundred and fifty thousand dollars per track before AI music production tools collapsed that number to approximately five dollars in API credits. Taking the low end of that range, this is a fifteen-thousand-fold reduction. The number is not rhetorical. It is the difference between Nana Coree’s lullabies being available to the grandchild born in Hartford and those lullabies remaining in the ethnomusicological fieldnotes where they were found: fragments, melodic phrases, rhythmic patterns that no one could source.
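The reduction factor follows directly from the figures in the paragraph above. In this short sketch (variable and function names are illustrative), the fifteen-thousand-fold figure corresponds to the low end of the quoted cost range, and the high end gives thirty-thousand-fold.

```python
OLD_COST_LOW = 75_000    # dollars per track, low end quoted in the text
OLD_COST_HIGH = 150_000  # dollars per track, high end quoted in the text
NEW_COST = 5             # approximate dollars in API credits, per the text


def reduction_factor(old_cost: float, new_cost: float) -> float:
    """How many times cheaper new_cost is than old_cost."""
    return old_cost / new_cost


factor_low = reduction_factor(OLD_COST_LOW, NEW_COST)
factor_high = reduction_factor(OLD_COST_HIGH, NEW_COST)
print(factor_low)   # 15000.0
print(factor_high)  # 30000.0
```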

The Lyrical Literacy to Suno pipeline is what the fifteen-thousand-fold reduction looks like in practice. A teacher types random nana. A song generates. meta nana wraps it in production intelligence. session nana produces the style string. Suno renders it. The audio file is ready for DistroKid, YouTube, a podcast, a classroom, a child who cannot sleep.

The pipeline has seven steps. The tutorial walks through each in six minutes. The total cost of the production, from first command to downloadable audio, is measurable in cents.

What this means for the communities the streaming algorithm cannot serve is not abstract. The oral traditions that survived without recording now have a recording mechanism. The lullabies that persisted in fragments through the mouths of ethnomusicologists now have a production pipeline. The cultural intelligence that existed in yards and markets and kothas and Kingston backstreets, and that the record industry did not think was worth preserving because there was no profitable market for it, now has the same production infrastructure as any major label release.

The platform is not your friend. The pipeline is.

Two Versions, One Decision

The tutorial’s instruction to listen for four things (vocal character, spoken word rendering, dub break generation, outro fade) is a listening curriculum compressed into sixty seconds of beginner instruction. It is teaching the user to evaluate a Suno output not as a consumer evaluating entertainment but as a producer evaluating whether the platform has rendered the cultural intention accurately.

Does the voice sound like a warm contralto? If it is too bright or thin, add deep warm contralto to the style field. This is not an aesthetic preference. It is a fidelity question. Nana Coree’s voice is a low warm contralto, something you feel in the chest before you process it as music. A bright or thin vocal is not Nana Coree. It is Suno’s default when the style string was not specific enough. The fix is more specificity, not more tolerance for approximation.

Did the spoken word sections come out as speech? If Suno sang them, the Patois storytelling register (the voice she uses when Anansi is about to act and the children need to lean in) has been collapsed into melody. The call-and-response structure, the two-voice continuum, the specific mechanism by which Nana Coree moves between narration and song without announcement: all of it disappears when the spoken word sections become sung. The fix is parentheses inside the tag. The principle is that the tag must be unambiguous enough that Suno cannot default to melody when speech is the intention.

Two versions generate. You listen to both. Version A might have a better vocal take. Version B might nail the dub break. The tutorial is honest about this: you are not locked in. You can regenerate sections individually. The evaluation is not pass/fail. It is a calibration between what the Lyrical Literacy tool encoded and what the Suno platform rendered, between the cultural intelligence of the ghost artist modifier and the probabilistic output of a generative model that does not know what it does not know.

The calibration is the work. The work is worth doing. The child in Hartford is listening.

What the Download Means

At the end of the pipeline, after the style string, the meta tags, the two versions, the evaluation, the fixes, and the regeneration, there is a three-dot menu and a Download button. The audio file saves to your device.

That file is production-ready. It can go to DistroKid for Spotify and Apple Music distribution. It can go to YouTube. It can go into a podcast or a Substack audio post or a classroom playlist or a child’s bedtime routine. It can go anywhere audio goes.

Nana Coree’s lullabies put children to sleep reliably. Adults could not remember them by morning. They survived as fragments in the fieldnotes of ethnomusicologists who could not trace them to a source. One researcher wrote: these songs know something. They were taught by someone who wanted them to last.

The download is how they last. The pipeline is how they travel. The ghost artist modifier is how the yard stays intact in the crossing.

Type random nana. Follow the pipeline. Click Download.

The River Mumma is at the bottom of every track. She has always been there. She is not surprised that it took this long to find a way to record her.

River Mumma Calling : Nana Coree

Tags: Lyrical Literacy Suno pipeline tutorial, Nana Coree custom mode production workflow, ghost artist meta tags Suno AI, Musinique AI music production education, River Mumma Calling production-ready track

#MusiqueAI #HumansAndAI #AIMusic #LyricalLiteracy #GhostArtists #SpiritSongs #AIforHumans #IndieMusician #MusicResearch #OpenSourceAI